Minimizing Microservice Vulnerabilities

Contents

[toc]

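Hands-on labs in this section

| Lab |
|---|
| 💘 Case: Run a container as a non-root user - 2023.5.29 (tested successfully) |
| 💘 Case: Avoid privileged containers and use capabilities instead - 2023.5.30 (tested successfully) |
| 💘 Case: Mount the container filesystem read-only - 2023.5.30 (tested successfully) |
| Case 1: Forbid creating privileged Pods |
| Example 2: Forbid containers that do not specify a non-root user |
| 💘 Hands-on: Deploy Gatekeeper - 2023.6.1 (tested successfully) |
| 💘 Case 1: Forbid privileged containers - 2023.6.1 (tested successfully) |
| 💘 Case 2: Allow only specific image registries - 2023.6.1 (tested successfully) |
| 💘 Hands-on: Integrate gVisor with Docker - 2023.6.2 (tested successfully) |
| 💘 Hands-on: Integrate gVisor with containerd - 2023.6.2 (tested successfully) |
| 💘 Hands-on: Run containers with gVisor on Kubernetes - 2023.6.3 (tested successfully) |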

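1. Pod Security Context

**Security Context:** a security mechanism that Kubernetes provides for Pods and containers; it configures privilege and access-control settings for a Pod or an individual container.

A security context can restrict the following:

• Discretionary Access Control (DAC): permission to access an object (for example a file) is decided by user ID (UID) and group ID (GID).

• Security-Enhanced Linux (SELinux): assigns security labels to objects.

• Running in privileged or unprivileged mode.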

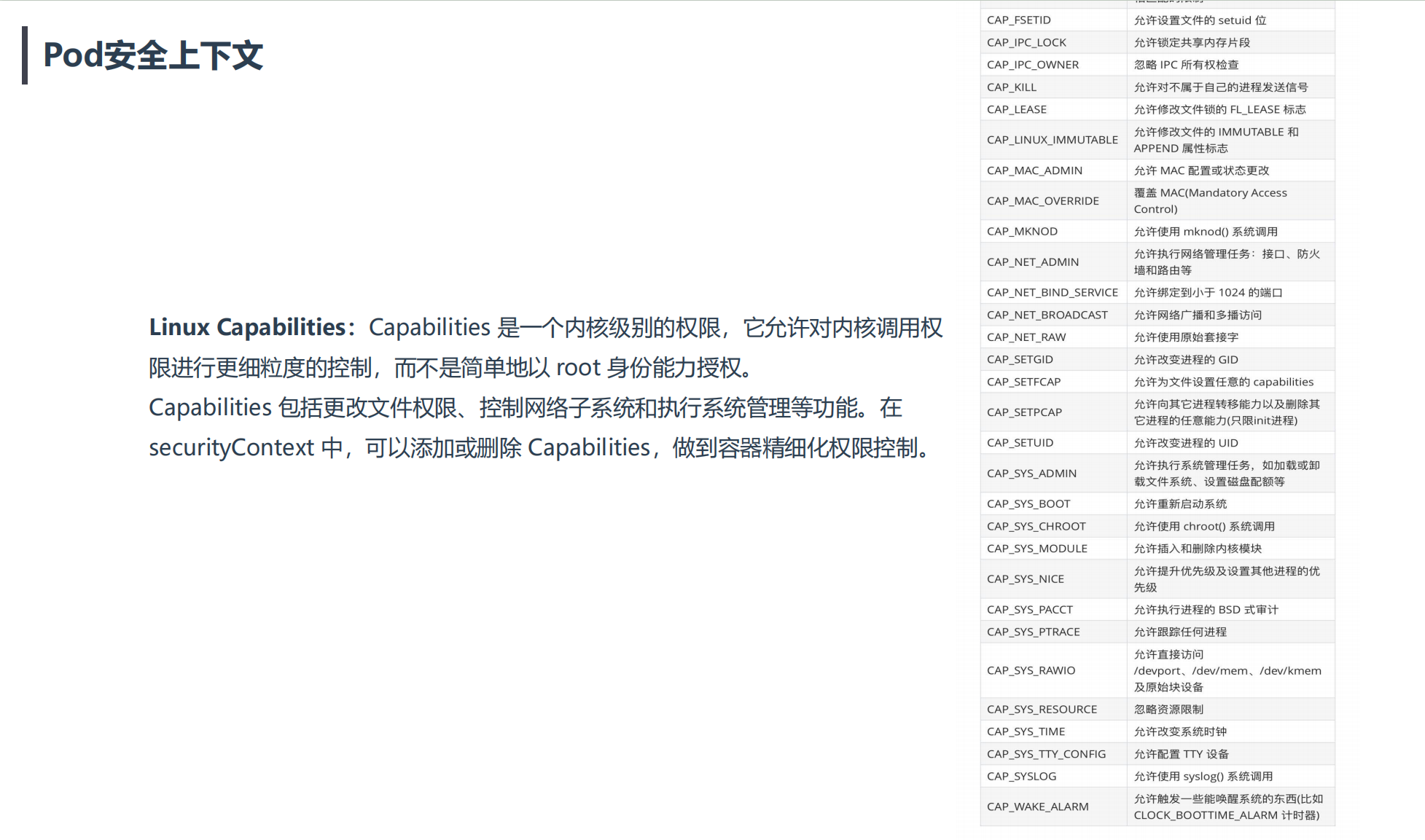

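• Linux Capabilities: grant a process some of root's privileges rather than all of them.

• AppArmor: restricts a container's access to resources through an AppArmor profile.

• Seccomp: restricts the system calls that container processes may make.

• AllowPrivilegeEscalation: controls whether a process in the container can gain more privileges than its parent (for example through SetUID or SetGID file modes); set it to false to forbid this. It is always true when the container runs in privileged mode or has CAP_SYS_ADMIN.

• readOnlyRootFilesystem: mounts the container's root filesystem read-only.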

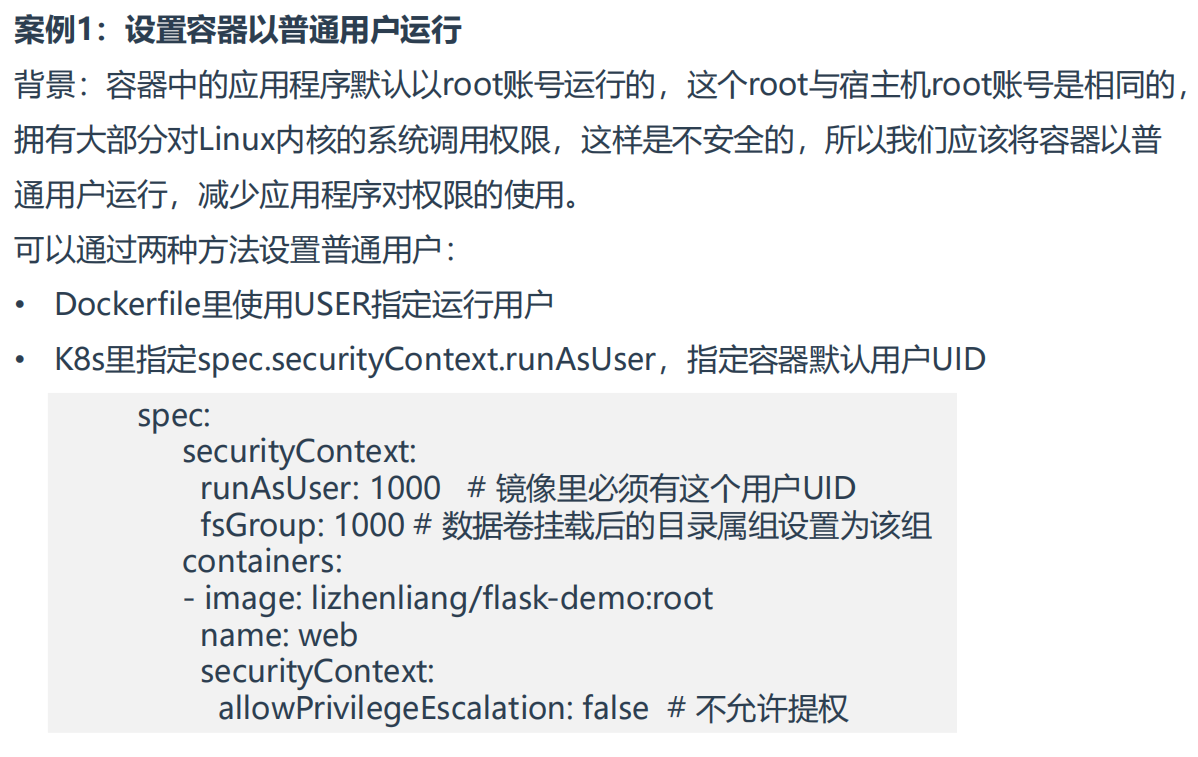

案例1:设置容器以普通用户运行

==💘 案例:设置容器以普通用户运行-2023.5.29(测试成功)==

- 实验环境

实�验环境:

1、win10,vmwrokstation虚机;

2、k8s集群:3台centos7.6 1810虚机,1个master节点,2个node节点

k8s version:v1.20.0

docker://20.10.7

- 实验软件

链接:https://pan.baidu.com/s/1mmw8OulDE98CqIy8oMbaOg?pwd=0820

提取码:0820

2023.5.29-securityContext-runAsUser-code

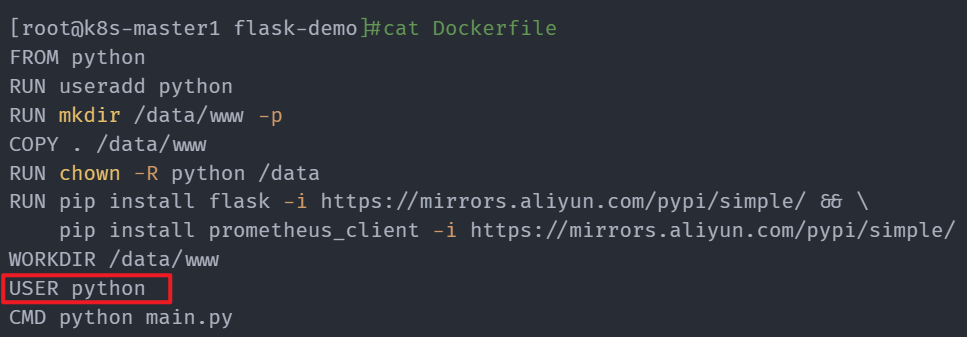

方法1:Dockerfile里使用USER指定运行用户

- 上传软件并解压

[root@k8s-master1 ~]#ll -h flask-demo.zip

-rw-r--r-- 1 root root 1.3K May 29 06:33 flask-demo.zip

[root@k8s-master1 ~]#unzip flask-demo.zip

Archive: flask-demo.zip

creating: flask-demo/

creating: flask-demo/templates/

inflating: flask-demo/templates/index.html

inflating: flask-demo/main.py

inflating: flask-demo/Dockerfile

[root@k8s-master1 ~]#cd flask-demo/

[root@k8s-master1 flask-demo]#ls

Dockerfile main.py templates

- Inspect the files

[root@k8s-master1 flask-demo]#pwd

/root/flask-demo

[root@k8s-master1 flask-demo]#ls

Dockerfile main.py templates

[root@k8s-master1 flask-demo]#cat templates/index.html # the template that will be rendered

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>首页</title>

</head>

<body>

<h1>Hello Python!</h1>

</body>

</html>

[root@k8s-master1 flask-demo]#cat main.py

from flask import Flask, render_template

app = Flask(__name__)


@app.route('/')
def index():
    # Render templates/index.html
    return render_template("index.html")


if __name__ == "__main__":
    app.run(host="0.0.0.0", port=8080)  # the app listens on port 8080

[root@k8s-master1 flask-demo]#cat Dockerfile

FROM python

RUN useradd python

RUN mkdir /data/www -p

COPY . /data/www

RUN chown -R python /data

RUN pip install flask -i https://mirrors.aliyun.com/pypi/simple/ && \

pip install prometheus_client -i https://mirrors.aliyun.com/pypi/simple/

WORKDIR /data/www

USER python

CMD python main.py

- Build the image

[root@docker flask-demo]#docker build -t flask-demo:v1 .

[root@docker flask-demo]#docker images|grep flask

flask-demo v1 a9cabb6241ed 22 seconds ago 937MB

- Start a container

[root@docker flask-demo]#docker run -d --name demo-v1 -p 8080:8080 flask-demo:v1

f3d7173e84ad6242d3c037e33a2ff0d654550bcac970534777188092e173dc6e

[root@docker flask-demo]#docker ps -l

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

f3d7173e84ad flask-demo:v1 "/bin/sh -c 'python …" 41 seconds ago Up 40 seconds 0.0.0.0:8080->8080/tcp, :::8080->8080/tcp demo-v1

- Enter the container; the program inside it runs as the python user:

[root@docker flask-demo]#docker exec -it demo-v1 bash

python@f3d7173e84ad:/data/www$ id

uid=1000(python) gid=1000(python) groups=1000(python)

python@f3d7173e84ad:/data/www$ id python

uid=1000(python) gid=1000(python) groups=1000(python)

python@f3d7173e84ad:/data/www$ ps -ef

UID PID PPID C STIME TTY TIME CMD

python 1 0 0 04:30 ? 00:00:00 /bin/sh -c python main.py

python 7 1 0 04:30 ? 00:00:00 python main.py

# On the host, the container's processes are also owned by UID 1000, an unprivileged user:

[root@docker flask-demo]#ps -ef|grep main

1000 80085 80065 0 12:30 ? 00:00:00 /bin/sh -c python main.py

1000 80114 80085 0 12:30 ? 00:00:00 python main.py

- If we remove USER python from the Dockerfile and start another container, the program runs as root, which is the container default:

[root@docker flask-demo]#vim Dockerfile

FROM python

RUN useradd python

RUN mkdir /data/www -p

COPY . /data/www

RUN chown -R python /data

RUN pip install flask -i https://mirrors.aliyun.com/pypi/simple/ && \

pip install prometheus_client -i https://mirrors.aliyun.com/pypi/simple/

WORKDIR /data/www

#USER python

CMD python main.py

# Build

[root@docker flask-demo]#docker build -t flask-demo:v2 .

# Start the container

[root@docker flask-demo]#docker run -d --name demo-v2 -p 8081:8080 flask-demo:v2

ce527b2bb4b59b28a3b5bb6fb50c4de4d0a5183eaafdb5631d866025af01a319

[root@docker flask-demo]#docker ps -l

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

ce527b2bb4b5 flask-demo:v2 "/bin/sh -c 'python …" 4 seconds ago Up 3 seconds 0.0.0.0:8081->8080/tcp, :::8081->8080/tcp demo-v2

# Check which user the program runs as inside the container:

[root@docker flask-demo]#docker exec -it demo-v2 bash

root@ce527b2bb4b5:/data/www# id

uid=0(root) gid=0(root) groups=0(root)

root@ce527b2bb4b5:/data/www# id python

uid=1000(python) gid=1000(python) groups=1000(python)

root@ce527b2bb4b5:/data/www# ps -ef

UID PID PPID C STIME TTY TIME CMD

root 1 0 0 04:41 ? 00:00:00 /bin/sh -c python main.py

root 7 1 0 04:41 ? 00:00:00 python main.py

# Check the owner of this container's processes on the host

[root@docker flask-demo]#ps -ef|grep main

1000 80085 80065 0 12:30 ? 00:00:00 /bin/sh -c python main.py

1000 80114 80085 0 12:30 ? 00:00:00 python main.py

root 80866 80847 0 12:41 ? 00:00:00 /bin/sh -c python main.py

root 80896 80866 0 12:41 ? 00:00:00 python main.py

As expected.

- docker run can also set the user that the program runs as; when a user name is given, that user must already exist in the image's passwd file

[root@docker flask-demo]#docker run -u xyy -d --name demo-test -p 8082:8080 flask-demo:v2

f7cd85c396fc769982fab012d32aa8cf979c49078503328c522c34c8b6f9f7fc

docker: Error response from daemon: unable to find user xyy: no matching entries in passwd file.

[root@docker flask-demo]#docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

f7cd85c396fc flask-demo:v2 "/bin/sh -c 'python …" 54 seconds ago Created 0.0.0.0:8082->8080/tcp, :::8082->8080/tcp demo-test

ce527b2bb4b5 flask-demo:v2 "/bin/sh -c 'python …" 4 minutes ago Up 4 minutes 0.0.0.0:8081->8080/tcp, :::8081->8080/tcp demo-v2

f3d7173e84ad flask-demo:v1 "/bin/sh -c 'python …" 15 minutes ago Up 15 minutes 0.0.0.0:8080->8080/tcp, :::8080->8080/tcp demo-v1

d83dbc25e4da kindest/node:v1.25.3 "/usr/local/bin/entr…" 2 months ago Up 2 months demo-worker2

8e32056c89da kindest/node:v1.25.3 "/usr/local/bin/entr…" 2 months ago Up 2 months demo-worker

bf4947c5578a kindest/node:v1.25.3 "/usr/local/bin/entr…" 2 months ago Up 2 months 127.0.0.1:39911->6443/tcp demo-control-plane

Test complete. 😘

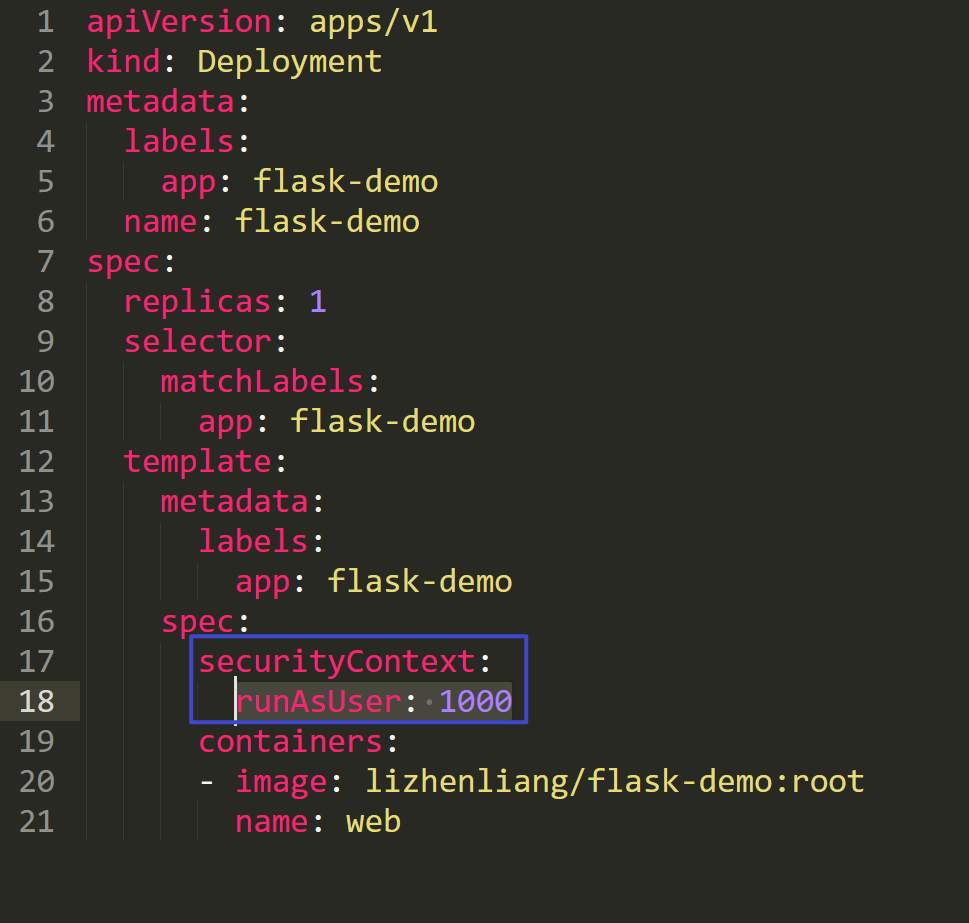

Method 2: In Kubernetes, set spec.securityContext.runAsUser to specify the container's default UID

- Deploy the Pod

mkdir /root/securityContext-runAsUser

cd /root/securityContext-runAsUser

[root@k8s-master1 securityContext-runAsUser]#kubectl create deployment flask-demo --image=nginx --dry-run=client -oyaml > deployment.yaml

[root@k8s-master1 securityContext-runAsUser]#vim deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: flask-demo
  name: flask-demo
spec:
  replicas: 1
  selector:
    matchLabels:
      app: flask-demo
  template:
    metadata:
      labels:
        app: flask-demo
    spec:
      securityContext:
        runAsUser: 1000
      containers:
      - image: lizhenliang/flask-demo:root
        name: web

Notes:

lizhenliang/flask-demo:root # in this image the program runs as root;

lizhenliang/flask-demo:noroot # in this image the program runs as UID 1000;

Apply the Deployment above:

[root@k8s-master1 securityContext-runAsUser]#kubectl apply -f deployment.yaml

deployment.apps/flask-demo created

- Verify

[root@k8s-master1 securityContext-runAsUser]#kubectl get po

NAME READY STATUS RESTARTS AGE

flask-demo-6c78dcd8dd-j6s67 1/1 Running 0 82s

[root@k8s-master1 securityContext-runAsUser]#kubectl exec -it flask-demo-6c78dcd8dd-j6s67 -- bash

python@flask-demo-6c78dcd8dd-j6s67:/data/www$ id

uid=1000(python) gid=1000(python) groups=1000(python)

python@flask-demo-6c78dcd8dd-j6s67:/data/www$ ps -ef

UID PID PPID C STIME TTY TIME CMD

python 1 0 0 12:10 ? 00:00:00 /bin/sh -c python main.py

python 7 1 0 12:10 ? 00:00:00 python main.py

python 8 0 0 12:11 pts/0 00:00:00 bash

python 15 8 0 12:11 pts/0 00:00:00 ps -ef

python@flask-demo-6c78dcd8dd-j6s67:/data/www$

The container is started as the user specified by spec.securityContext.runAsUser, as expected.

- What happens if spec.securityContext.runAsUser specifies a UID that does not exist in the image?

[root@k8s-master1 securityContext-runAsUser]#cp deployment.yaml deployment2.yaml

[root@k8s-master1 securityContext-runAsUser]#vim deployment2.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: flask-demo
  name: flask-demo2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: flask-demo
  template:
    metadata:
      labels:
        app: flask-demo
    spec:
      securityContext:
        runAsUser: 1001
      containers:
      - image: lizhenliang/flask-demo:root
        name: web

Deploy:

[root@k8s-master1 securityContext-runAsUser]#kubectl apply -f deployment2.yaml

deployment.apps/flask-demo2 created

Check:

[root@k8s-master1 securityContext-runAsUser]#kubectl get po -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

flask-demo-6c78dcd8dd-j6s67 1/1 Running 0 6m35s 10.244.169.173 k8s-node2 <none> <none>

flask-demo2-5d978567ff-tcqws 1/1 Running 0 18s 10.244.169.174 k8s-node2 <none> <none>

[root@k8s-master1 securityContext-runAsUser]#kubectl exec -it flask-demo2-5d978567ff-tcqws -- bash

I have no name!@flask-demo2-5d978567ff-tcqws:/data/www$

I have no name!@flask-demo2-5d978567ff-tcqws:/data/www$ id

uid=1001 gid=0(root) groups=0(root)

I have no name!@flask-demo2-5d978567ff-tcqws:/data/www$ cat /etc/passwd|grep 1001

I have no name!@flask-demo2-5d978567ff-tcqws:/data/www$ tail -4 /etc/passwd

gnats:x:41:41:Gnats Bug-Reporting System (admin):/var/lib/gnats:/usr/sbin/nologin

nobody:x:65534:65534:nobody:/nonexistent:/usr/sbin/nologin

_apt:x:100:65534::/nonexistent:/usr/sbin/nologin

python:x:1000:1000::/home/python:/bin/sh

I have no name!@flask-demo2-5d978567ff-tcqws:/data/www$

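If spec.securityContext.runAsUser specifies a UID that has no entry in the image's /etc/passwd, the Pod is still created without error. The process runs with that UID, and the shell prompt shows I have no name! in place of a user name because the UID cannot be resolved to one.

- Next, test the lizhenliang/flask-demo:noroot image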
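The prompt text comes from a failed UID-to-name lookup, not from Kubernetes. The following Python sketch illustrates the idea under one assumption: the helper name `prompt_user_name` and the injectable `lookup` parameter are hypothetical and exist only so the behaviour can be shown without a real container.

```python
import pwd
from typing import Callable


def prompt_user_name(uid: int, lookup: Callable = pwd.getpwuid) -> str:
    """Return the user name for `uid`, or the fallback text bash prints when there is none."""
    try:
        # pwd.getpwuid raises KeyError when the UID has no passwd entry.
        return lookup(uid).pw_name
    except KeyError:
        # The process still runs with this UID; it simply has no name.
        return "I have no name!"


if __name__ == "__main__":
    import os

    print(os.getuid(), prompt_user_name(os.getuid()))
```

Run inside the flask-demo2 Pod, a lookup for UID 1001 would take the `KeyError` branch, which is consistent with the empty `grep 1001` result shown in the transcript.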

[root@k8s-master1 securityContext-runAsUser]#cp deployment2.yaml deployment3.yaml

[root@k8s-master1 securityContext-runAsUser]#vim deployment3.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: flask-demo
  name: flask-demo3
spec:
  replicas: 1
  selector:
    matchLabels:
      app: flask-demo
  template:
    metadata:
      labels:
        app: flask-demo
    spec:
      #securityContext:
      #  runAsUser: 1001
      containers:
      - image: lizhenliang/flask-demo:noroot
        name: web

Deploy:

[root@k8s-master1 securityContext-runAsUser]#kubectl apply -f deployment3.yaml

deployment.apps/flask-demo3 created

Test:

[root@k8s-master1 securityContext-runAsUser]#kubectl get po

NAME READY STATUS RESTARTS AGE

flask-demo-6c78dcd8dd-j6s67 1/1 Running 0 14m

flask-demo2-5d978567ff-tcqws 1/1 Running 0 8m4s

flask-demo3-5fd4b7787c-cjjct 1/1 Running 0 84s

[root@k8s-master1 securityContext-runAsUser]#kubectl exec -it flask-demo3-5fd4b7787c-cjjct -- bash

python@flask-demo3-5fd4b7787c-cjjct:/data/www$ id

uid=1000(python) gid=1000(python) groups=1000(python)

python@flask-demo3-5fd4b7787c-cjjct:/data/www$ ps -ef

UID PID PPID C STIME TTY TIME CMD

python 1 0 0 12:23 ? 00:00:00 /bin/sh -c python main.py

python 7 1 0 12:23 ? 00:00:00 python main.py

python 8 0 0 12:23 pts/0 00:00:00 bash

python 15 8 0 12:24 pts/0 00:00:00 ps -ef

python@flask-demo3-5fd4b7787c-cjjct:/data/www$

As expected.

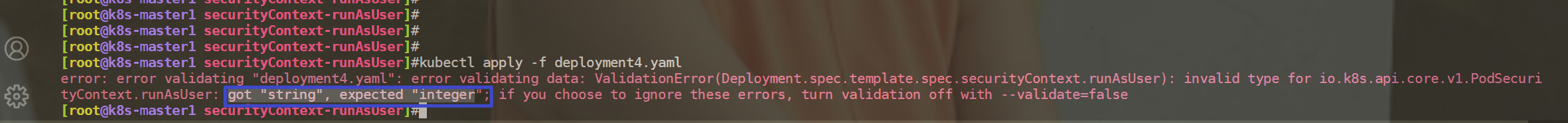

- One more test: what happens if spec.securityContext.runAsUser is given a user name instead of a UID?

[root@k8s-master1 securityContext-runAsUser]#cp deployment3.yaml deployment4.yaml

[root@k8s-master1 securityContext-runAsUser]#vim deployment4.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: flask-demo
  name: flask-demo4
spec:
  replicas: 1
  selector:
    matchLabels:
      app: flask-demo
  template:
    metadata:
      labels:
        app: flask-demo
    spec:
      securityContext:
        runAsUser: "python"
      containers:
      - image: lizhenliang/flask-demo:noroot
        name: web

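Applying this manifest fails immediately, because runAsUser is an integer field and does not accept a name.

Therefore:

spec.securityContext.runAsUser must be set to a numeric UID.

Test complete. 😘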

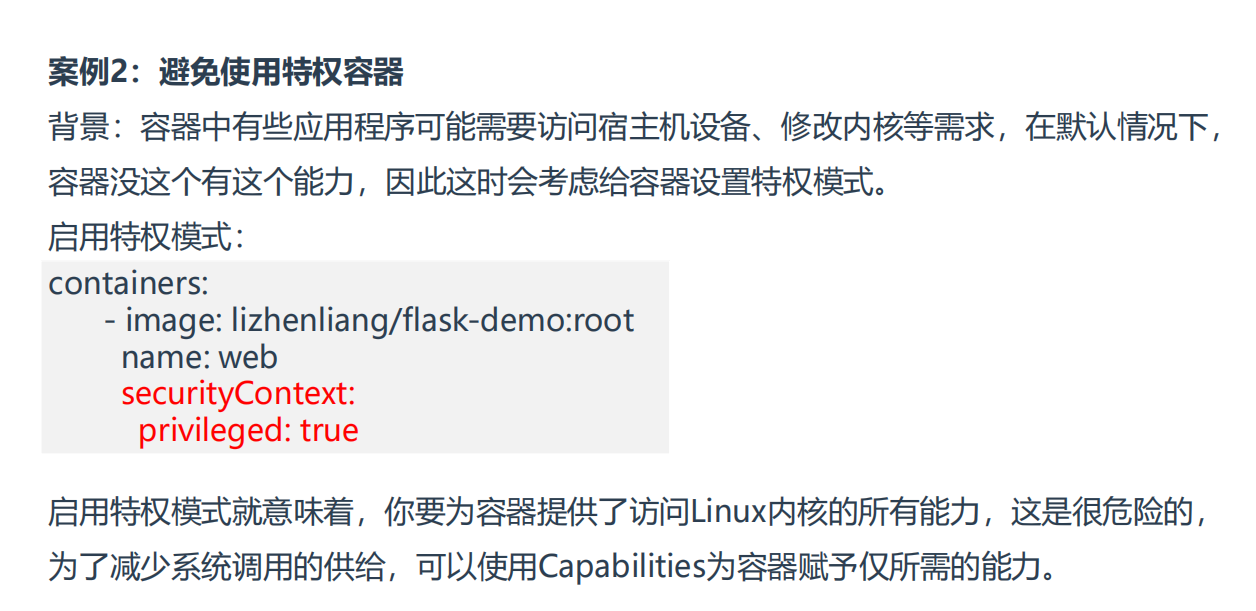

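Case 2: Avoid privileged containers

Linux capabilities split the privileges traditionally held by root into distinct units that can be granted or dropped individually.

The capsh command shows which capabilities the current shell has:

# On the host (CentOS)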

[root@k8s-master1 Capabilities]#capsh --print

Current: = cap_chown,cap_dac_override,cap_dac_read_search,cap_fowner,cap_fsetid,cap_kill,cap_setgid,cap_setuid,cap_setpcap,cap_linux_immutable,cap_net_bind_service,cap_net_broadcast,cap_net_admin,cap_net_raw,cap_ipc_lock,cap_ipc_owner,cap_sys_module,cap_sys_rawio,cap_sys_chroot,cap_sys_ptrace,cap_sys_pacct,cap_sys_admin,cap_sys_boot,cap_sys_nice,cap_sys_resource,cap_sys_time,cap_sys_tty_config,cap_mknod,cap_lease,cap_audit_write,cap_audit_control,cap_setfcap,cap_mac_override,cap_mac_admin,cap_syslog,35,36+ep

Bounding set =cap_chown,cap_dac_override,cap_dac_read_search,cap_fowner,cap_fsetid,cap_kill,cap_setgid,cap_setuid,cap_setpcap,cap_linux_immutable,cap_net_bind_service,cap_net_broadcast,cap_net_admin,cap_net_raw,cap_ipc_lock,cap_ipc_owner,cap_sys_module,cap_sys_rawio,cap_sys_chroot,cap_sys_ptrace,cap_sys_pacct,cap_sys_admin,cap_sys_boot,cap_sys_nice,cap_sys_resource,cap_sys_time,cap_sys_tty_config,cap_mknod,cap_lease,cap_audit_write,cap_audit_control,cap_setfcap,cap_mac_override,cap_mac_admin,cap_syslog,35,36

Securebits: 00/0x0/1'b0

secure-noroot: no (unlocked)

secure-no-suid-fixup: no (unlocked)

secure-keep-caps: no (unlocked)

uid=0(root)

gid=0(root)

groups=0(root)

# Now start a CentOS container and look at its capabilities:

[root@k8s-master1 Capabilities]#docker run -d centos sleep 24h

Unable to find image 'centos:latest' locally

latest: Pulling from library/centos

a1d0c7532777: Pull complete

Digest: sha256:a27fd8080b517143cbbbab9dfb7c8571c40d67d534bbdee55bd6c473f432b177

Status: Downloaded newer image for centos:latest

e69eddf42c4cc9c44d943786a2978cd55e3bdf68b9de23f6e221a2e44d8f63b0

[root@k8s-master1 Capabilities]#docker exec -it e69eddf42c4cc9c44d943786a2978cd55e3bdf68b9de23f6e221a2e44d8f63b0 bash

[root@e69eddf42c4c /]# capsh --print

Current: = cap_chown,cap_dac_override,cap_fowner,cap_fsetid,cap_kill,cap_setgid,cap_setuid,cap_setpcap,cap_net_bind_service,cap_net_raw,cap_sys_chroot,cap_mknod,cap_audit_write,cap_setfcap+eip

Bounding set =cap_chown,cap_dac_override,cap_fowner,cap_fsetid,cap_kill,cap_setgid,cap_setuid,cap_setpcap,cap_net_bind_service,cap_net_raw,cap_sys_chroot,cap_mknod,cap_audit_write,cap_setfcap

Ambient set =

Securebits: 00/0x0/1'b0

secure-noroot: no (unlocked)

secure-no-suid-fixup: no (unlocked)

secure-keep-caps: no (unlocked)

secure-no-ambient-raise: no (unlocked)

uid=0(root)

gid=0(root)

groups=

[root@e69eddf42c4c /]#

# The CentOS container has far fewer capabilities than the host.
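To see exactly which capabilities the container lacks, the two `Current:` lines can be compared as sets. The sketch below is only an illustration: the function name `parse_caps` is hypothetical, and the two strings are copied from the capsh output above.

```python
from typing import Set

HOST_CURRENT = (
    "Current: = cap_chown,cap_dac_override,cap_dac_read_search,cap_fowner,cap_fsetid,"
    "cap_kill,cap_setgid,cap_setuid,cap_setpcap,cap_linux_immutable,cap_net_bind_service,"
    "cap_net_broadcast,cap_net_admin,cap_net_raw,cap_ipc_lock,cap_ipc_owner,cap_sys_module,"
    "cap_sys_rawio,cap_sys_chroot,cap_sys_ptrace,cap_sys_pacct,cap_sys_admin,cap_sys_boot,"
    "cap_sys_nice,cap_sys_resource,cap_sys_time,cap_sys_tty_config,cap_mknod,cap_lease,"
    "cap_audit_write,cap_audit_control,cap_setfcap,cap_mac_override,cap_mac_admin,"
    "cap_syslog,35,36+ep"
)
CONTAINER_CURRENT = (
    "Current: = cap_chown,cap_dac_override,cap_fowner,cap_fsetid,cap_kill,cap_setgid,"
    "cap_setuid,cap_setpcap,cap_net_bind_service,cap_net_raw,cap_sys_chroot,cap_mknod,"
    "cap_audit_write,cap_setfcap+eip"
)


def parse_caps(capsh_line: str) -> Set[str]:
    """Extract the capability names from one line of `capsh --print` output."""
    # The list follows the first '='; a suffix such as '+ep' holds the flag letters.
    _, _, tail = capsh_line.partition("=")
    names = tail.split("+", 1)[0]
    return {name.strip() for name in names.split(",") if name.strip()}


if __name__ == "__main__":
    missing = parse_caps(HOST_CURRENT) - parse_caps(CONTAINER_CURRENT)
    print(sorted(missing))
```

The difference includes `cap_sys_admin`, which is relevant to the mount test in the case below.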

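==💘 Case: Avoid privileged containers and use capabilities instead - 2023.5.30 (tested successfully)==

- Lab environment

1. Windows 10 with VMware Workstation virtual machines;

2. Kubernetes cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

- Lab software

None.

- The docker command line also has a privileged flag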

[root@k8s-master1 ~]#docker run --help|grep privi

--privileged

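- As mentioned earlier, even when a container process runs as root, that root has considerably fewer capabilities than root on the host, because the container engine restricts them for security reasons.

For example, inside a container even root cannot use the mount command, because CAP_SYS_ADMIN is not in the default capability set.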

[root@k8s-master1 ~]#kubectl get po

NAME READY STATUS RESTARTS AGE

busybox 1/1 Running 13 8d

[root@k8s-master1 ~]#kubectl exec -it busybox -- sh

/ # mount -t tmpfs /tmp /tmp

mount: permission denied (are you root?)

/ # id

uid=0(root) gid=0(root) groups=10(wheel)

/ #

# On the host, of course, root can use mount normally:

[root@k8s-master1 ~]#ls /tmp/

2023.5.23-code 2023.5.23-code.tar.gz vmware-root_5805-1681267545

[root@k8s-master1 ~]#mount -t tmpfs /tmp /tmp

[root@k8s-master1 ~]#df -hT|grep tmp

/tmp tmpfs 910M 0 910M 0% /tmp

- Enable privileged mode and test

[root@k8s-master1 ~]#mkdir Capabilities

[root@k8s-master1 ~]#cd Capabilities/

[root@k8s-master1 Capabilities]#vim pod1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-sc1
spec:
  containers:
  - image: busybox
    name: web
    command:
    - sleep
    - 24h
    securityContext:
      privileged: true

# Deploy:

[root@k8s-master1 Capabilities]#kubectl apply -f pod1.yaml

pod/pod-sc1 created

# Test:

[root@k8s-master1 Capabilities]#kubectl get po

NAME READY STATUS RESTARTS AGE

pod-sc1 1/1 Running 0 61s

[root@k8s-master1 Capabilities]#kubectl exec -it pod-sc1 -- sh

/ #

/ # mount -t tmpfs /tmp /tmp

/ # id

uid=0(root) gid=0(root) groups=10(wheel)

/ # df -hT|grep tmp

tmpfs tmpfs 64.0M 0 64.0M 0% /dev

tmpfs tmpfs 909.8M 0 909.8M 0% /sys/fs/cgroup

shm tmpfs 64.0M 0 64.0M 0% /dev/shm

tmpfs tmpfs 909.8M 12.0K 909.8M 0% /var/run/secrets/kubernetes.io/serviceaccount

/tmp tmpfs 909.8M 0 909.8M 0% /tmp

/ # ls /tmp/

/ #

With privileged mode enabled, the container can use mount to mount a filesystem.

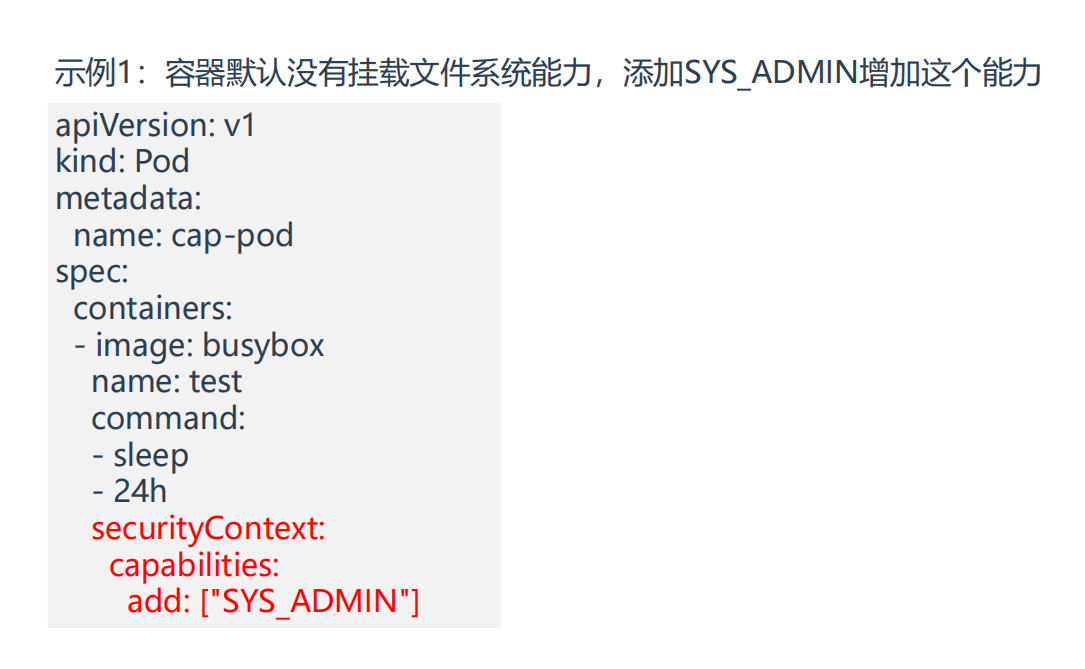

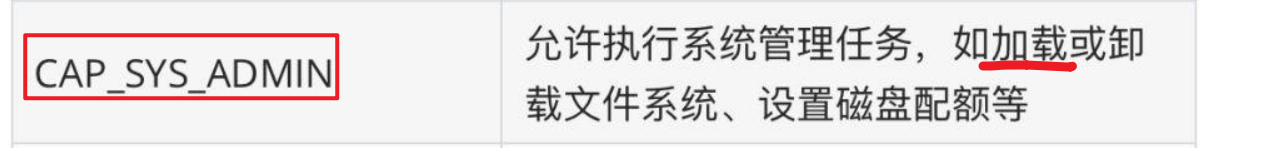

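- Now, instead of a privileged container, we grant only the SYS_ADMIN capability to solve the same problem

CAP_SYS_ADMIN permits a broad range of system administration operations, including mount and umount. It is still a very broad capability, so grant it only where it is really needed.

Deploy the Pod: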

[root@k8s-master1 Capabilities]#cp pod1.yaml pod2.yaml

[root@k8s-master1 Capabilities]#vim pod2.yaml

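apiVersion: v1
kind: Pod
metadata:
  name: pod-sc2
spec:
  containers:
  - image: busybox
    name: web
    command:
    - sleep
    - 24h
    securityContext:
      capabilities:
        add: ["SYS_ADMIN"]

# Note: in this environment both "SYS_ADMIN" and "CAP_SYS_ADMIN" were accepted. The Kubernetes documentation says to omit the CAP_ prefix in manifests, so "SYS_ADMIN" is the safer form.

# Deploy: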

[root@k8s-master1 Capabilities]#kubectl apply -f pod2.yaml

pod/pod-sc2 created

# Test:

[root@k8s-master1 Capabilities]#kubectl get po

NAME READY STATUS RESTARTS AGE

pod-sc1 1/1 Running 0 9m9s

pod-sc2 1/1 Running 0 36s

[root@k8s-master1 Capabilities]#kubectl exec -it pod-sc2 -- sh

/ #

/ # mount -t tmpfs /tmp /tmp

/ # id

uid=0(root) gid=0(root) groups=10(wheel)

/ # df -hT|grep tmp

/tmp tmpfs 909.8M 0 909.8M 0% /tmp

/ #

With the SYS_ADMIN capability added, the container can now mount filesystems, as expected. 😘
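Where capsh is not installed in an image, the same information can be read from the `CapEff` line of `/proc/<pid>/status`, which holds the effective capabilities as a hexadecimal bitmask. The sketch below decodes such a mask; the helper names are hypothetical, the bit order follows `linux/capability.h`, and the example mask is computed from the 14 capabilities in the container's capsh output shown earlier.

```python
from typing import Set

# Capability names indexed by bit number (linux/capability.h order).
CAP_NAMES = [
    "cap_chown", "cap_dac_override", "cap_dac_read_search", "cap_fowner",
    "cap_fsetid", "cap_kill", "cap_setgid", "cap_setuid", "cap_setpcap",
    "cap_linux_immutable", "cap_net_bind_service", "cap_net_broadcast",
    "cap_net_admin", "cap_net_raw", "cap_ipc_lock", "cap_ipc_owner",
    "cap_sys_module", "cap_sys_rawio", "cap_sys_chroot", "cap_sys_ptrace",
    "cap_sys_pacct", "cap_sys_admin", "cap_sys_boot", "cap_sys_nice",
    "cap_sys_resource", "cap_sys_time", "cap_sys_tty_config", "cap_mknod",
    "cap_lease", "cap_audit_write", "cap_audit_control", "cap_setfcap",
    "cap_mac_override", "cap_mac_admin", "cap_syslog", "cap_wake_alarm",
    "cap_block_suspend", "cap_audit_read", "cap_perfmon", "cap_bpf",
    "cap_checkpoint_restore",
]

# Mask for the 14 default container capabilities listed by capsh above.
DEFAULT_CONTAINER_MASK = 0x00000000A80425FB


def parse_cap_eff(status_text: str) -> int:
    """Return the CapEff bitmask from the text of /proc/<pid>/status."""
    for line in status_text.splitlines():
        if line.startswith("CapEff:"):
            return int(line.split()[1], 16)
    raise ValueError("no CapEff line found")


def decode_caps(mask: int) -> Set[str]:
    """Translate a capability bitmask into a set of capability names."""
    return {name for bit, name in enumerate(CAP_NAMES) if mask & (1 << bit)}


if __name__ == "__main__":
    with open("/proc/self/status") as status_file:
        print(sorted(decode_caps(parse_cap_eff(status_file.read()))))
```

Adding SYS_ADMIN sets bit 21 of the mask, so a Pod like pod-sc2 would be expected to report `cap_sys_admin` in addition to the default set.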

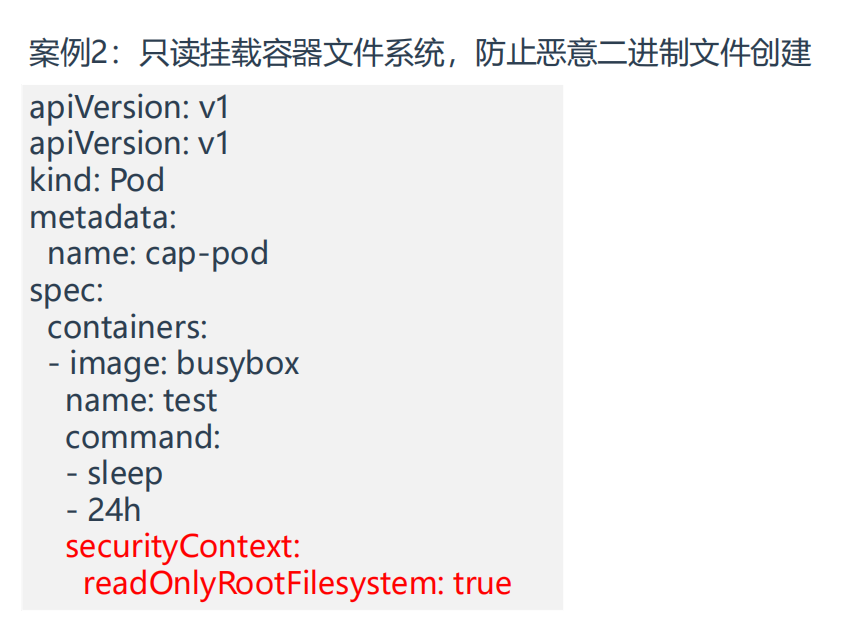

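Case 3: Mount the container filesystem read-only

==💘 Case: Mount the container filesystem read-only - 2023.5.30 (tested successfully)==

- Lab environment

1. Windows 10 with VMware Workstation virtual machines;

2. Kubernetes cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

- Lab software

None.

- By default, files can be created freely inside a Pod's container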

[root@k8s-master1 Capabilities]#kubectl exec -it pod-sc2 -- sh

/ #

/ # touch 1

/ # ls

1 bin dev etc home lib lib64 proc root sys tmp usr var

/ #

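- For security reasons, however, we may not want the program to change any file in the container. How can that be enforced?

This is what the read-only root filesystem setting is for. Let's test it.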

[root@k8s-master1 Capabilities]#cp pod2.yaml pod3.yaml

[root@k8s-master1 Capabilities]#vim pod3.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-sc3
spec:
  containers:
  - image: busybox
    name: web
    command:
    - sleep
    - 24h
    securityContext:
      readOnlyRootFilesystem: true

# Deploy:

[root@k8s-master1 Capabilities]#kubectl apply -f pod3.yaml

pod/pod-sc3 created

# Test:

[root@k8s-master1 Capabilities]#kubectl get po |grep pod-sc

pod-sc1 1/1 Running 0 17m

pod-sc2 1/1 Running 0 9m5s

pod-sc3 1/1 Running 0 52s

[root@k8s-master1 Capabilities]#kubectl exec -it pod-sc3 -- sh

/ #

/ # ls

bin dev etc home lib lib64 proc root sys tmp usr var

/ # touch a

touch: a: Read-only file system

/ # mkdir a

mkdir: can't create directory 'a': Read-only file system

/ #

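With readOnlyRootFilesystem enabled, no file on the container's root filesystem can be created or modified (separately mounted volumes are not affected), so the requirement is met. Test complete. 😘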
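An application that runs with a read-only root filesystem usually still needs some writable scratch space, typically an emptyDir volume mounted at a known path. The following sketch shows one way a program could probe a directory at startup; the function name `can_write` is hypothetical and the choice of errno values is an assumption about which failures should count as "not writable".

```python
import errno
import os
import tempfile


def can_write(directory: str) -> bool:
    """Return True if a temporary file can be created in `directory`."""
    try:
        fd, path = tempfile.mkstemp(dir=directory)
    except OSError as exc:
        # EROFS is what a read-only filesystem reports; EACCES/EPERM cover permissions.
        if exc.errno in (errno.EROFS, errno.EACCES, errno.EPERM):
            return False
        raise
    os.close(fd)
    os.unlink(path)
    return True


if __name__ == "__main__":
    for candidate in ("/", tempfile.gettempdir()):
        print(candidate, can_write(candidate))
```

In pod-sc3, probing `/` would be expected to return False, matching the `Read-only file system` errors from touch and mkdir above.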

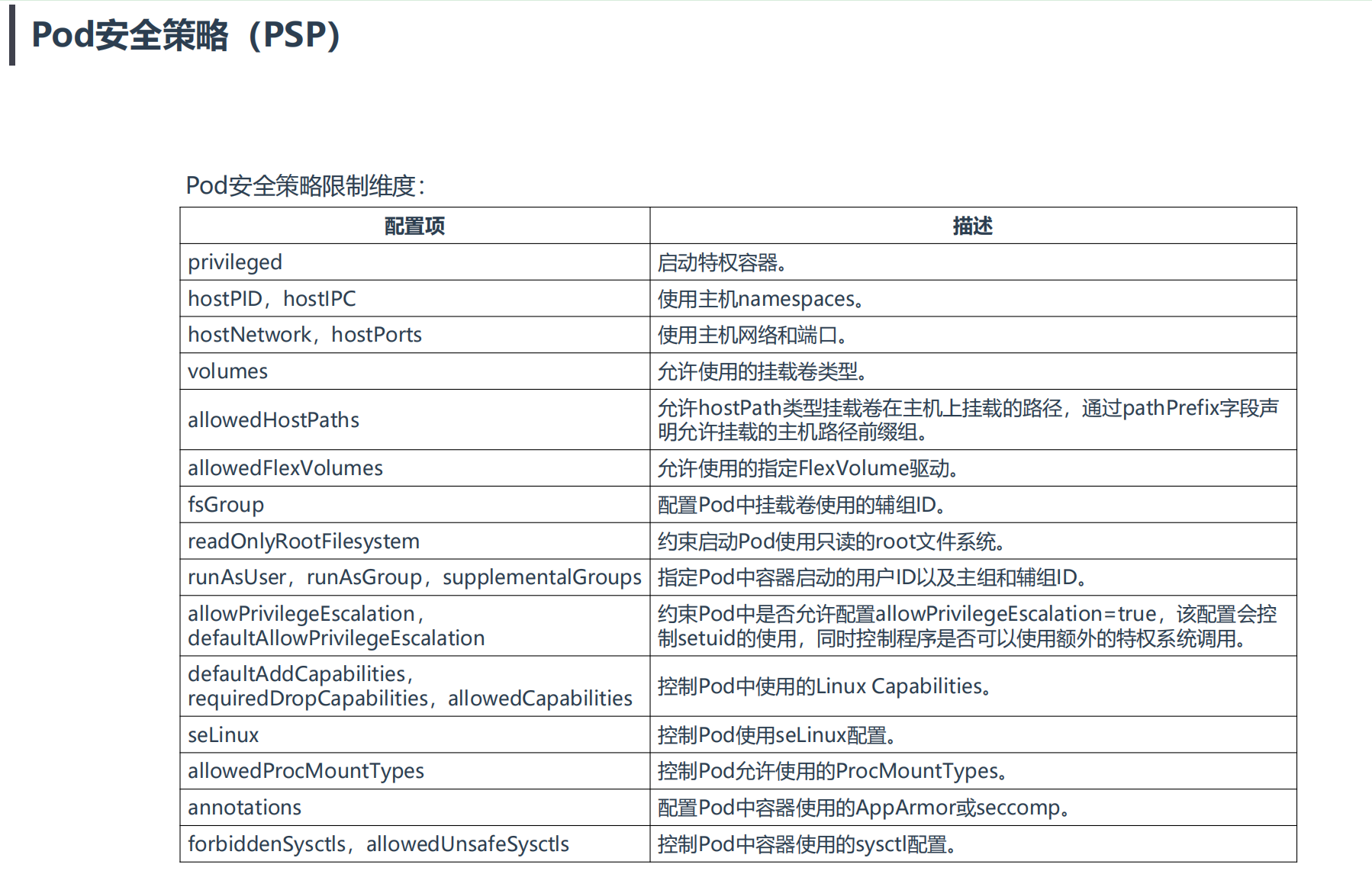

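2. Pod Security Policy (PSP)

**PodSecurityPolicy (PSP):** an important security check applied when Pods are deployed in Kubernetes; it constrains the runtime security behavior of applications.

A PSP object defines a set of conditions that Pods must satisfy at run time, together with default values for the related fields. Kubernetes accepts a Pod only if it meets those conditions.

If any step fails, the deployment is rejected. Putting PSP into practice therefore requires the following:

• Create a service account (SA)

• The SA must have permission to create the relevant resources, for example Pods and Deployments

• The SA must be allowed to use the PSP: create a Role that grants use of the PSP, then bind the SA to that Role

==💘 Hands-on: Pod Security Policy - 2023.5.31 (tested successfully)==

- Lab environment

1. Windows 10 with VMware Workstation virtual machines;

2. Kubernetes cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

- Lab software

Link: https://pan.baidu.com/s/14gKA3TVzy9wQLk2aCCKw_g?pwd=0820

Extraction code: 0820

2023.5.31-psp-code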

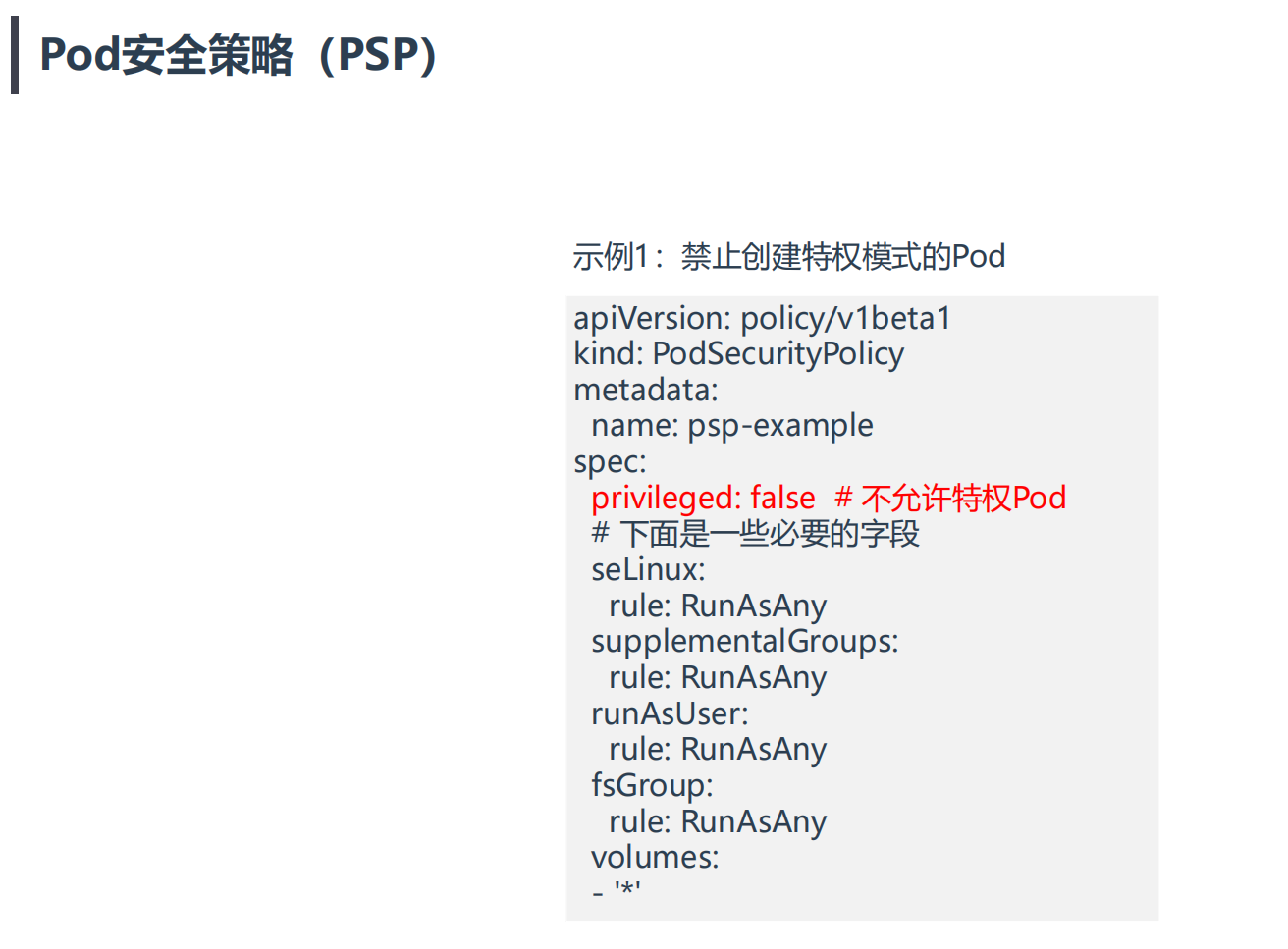

Case 1: Forbid creating privileged Pods

# Create the SA

kubectl create serviceaccount aliang6

# Bind the SA to the built-in edit ClusterRole

kubectl create rolebinding aliang6 --clusterrole=edit --serviceaccount=default:aliang6

# Create a Role that grants use of the PSP

kubectl create role psp:unprivileged --verb=use --resource=podsecuritypolicy --resource-name=psp-example

# Bind the SA to the Role

kubectl create rolebinding aliang6:psp:unprivileged --role=psp:unprivileged --serviceaccount=default:aliang6

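1. Enable Pod Security Policy on the cluster

Pod Security Policy is implemented as an admission controller named PodSecurityPolicy, which is not enabled by default. Once enabled, it enforces the policies, and Pods that do not satisfy them cannot be created. It is therefore advisable to add policies and authorize them before enabling PSP.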

[root@k8s-master1 ~]#vim /etc/kubernetes/manifests/kube-apiserver.yaml

Replace

- --enable-admission-plugins=NodeRestriction

with

- --enable-admission-plugins=NodeRestriction,PodSecurityPolicy

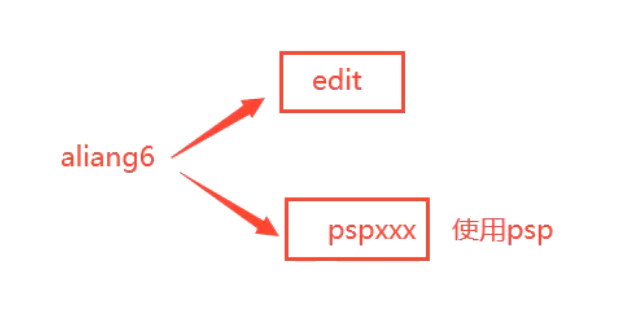

2. Create the SA and bind it to the built-in edit ClusterRole

[root@k8s-master1 ~]#kubectl create serviceaccount aliang6^C

[root@k8s-master1 ~]#mkdir psp

[root@k8s-master1 ~]#cd psp/

[root@k8s-master1 psp]#kubectl create serviceaccount aliang6

serviceaccount/aliang6 created

[root@k8s-master1 psp]#kubectl create rolebinding aliang6 --clusterrole=edit --serviceaccount=default:aliang6

rolebinding.rbac.authorization.k8s.io/aliang6 created

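# Note: the edit ClusterRole allows read/write access to most resources in a namespace, but it does not allow viewing or modifying Roles or RoleBindings.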

[root@k8s-master1 psp]#kubectl get clusterrole|grep -v system:

NAME CREATED AT

admin 2022-10-22T02:34:47Z

calico-kube-controllers 2022-10-22T02:41:12Z

calico-node 2022-10-22T02:41:12Z

cluster-admin 2022-10-22T02:34:47Z

edit 2022-10-22T02:34:47Z

ingress-nginx 2022-11-29T11:28:49Z

kubeadm:get-nodes 2022-10-22T02:34:48Z

kubernetes-dashboard 2022-10-22T02:42:46Z

view 2022-10-22T02:34:47Z

3. Configure the PSP

[root@k8s-master1 psp]#vim psp.yaml

apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp-example
spec:
  privileged: false  # do not allow privileged Pods
  # The fields below are required
  seLinux:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  runAsUser:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  volumes:
  - '*'

# Deploy:

[root@k8s-master1 psp]#kubectl apply -f psp.yaml

podsecuritypolicy.policy/psp-example created

4. Create resources to test

First create a Deployment:

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 create deployment web --image=nginx

deployment.apps/web created

[root@k8s-master1 psp]#kubectl get deploy

NAME READY UP-TO-DATE AVAILABLE AGE

web 0/1 0 0 6s

[root@k8s-master1 psp]#kubectl get pod|grep web

# no output

The Deployment object was created, but its Pod was not.

Next, create a Pod directly:

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 run web --image=nginx

Error from server (Forbidden): pods "web" is forbidden: PodSecurityPolicy: unable to admit pod: []

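The Deployment object was created but produced no Pod, and creating a Pod directly was rejected. This is because the aliang6 SA has not yet been granted permission to use the PSP.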
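The empty list in `unable to admit pod: []` makes sense once the admission step is viewed as "try every policy the requester may use". The sketch below is a deliberately simplified Python model of that idea, not the admission controller's code: the `Policy` class and `admit` function are hypothetical, and only the `privileged` field is modelled.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Policy:
    name: str
    allow_privileged: bool


def admit(pod_privileged: bool, policies: List[Policy], usable: Set[str]) -> str:
    """Return the name of the first usable policy that accepts the Pod."""
    errors = []
    for policy in policies:
        if policy.name not in usable:
            # Policies without a `use` grant are never tried, so they add no error.
            continue
        if pod_privileged and not policy.allow_privileged:
            errors.append(f"{policy.name}: Privileged containers are not allowed")
            continue
        return policy.name
    raise PermissionError(f"unable to admit pod: {errors}")


if __name__ == "__main__":
    psp = Policy("psp-example", allow_privileged=False)
    try:
        admit(False, [psp], usable=set())
    except PermissionError as exc:
        print(exc)  # no usable policy, so the error list is empty
    print(admit(False, [psp], usable={"psp-example"}))
```

In this model, a requester with no usable policy is rejected with an empty error list, while a privileged Pod checked against a usable policy is rejected with a specific reason.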

5. Create a Role that grants use of the PSP and bind the SA to it

# Create a Role that grants use of the PSP

[root@k8s-master1 psp]#kubectl create role psp:unprivileged --verb=use --resource=podsecuritypolicy --resource-name=psp-example

role.rbac.authorization.k8s.io/psp:unprivileged created

# Bind the SA to the Role

[root@k8s-master1 psp]#kubectl create rolebinding aliang6:psp:unprivileged --role=psp:unprivileged --serviceaccount=default:aliang6

rolebinding.rbac.authorization.k8s.io/aliang6:psp:unprivileged created

6. Test again

Create a privileged Pod:

[root@k8s-master1 psp]#kubectl run pod-psp --image=busybox --dry-run=client -oyaml > pod.yaml

[root@k8s-master1 psp]#vim pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-psp
spec:
  containers:
  - image: busybox
    name: pod-psp
    command:
    - sleep
    - 24h
    securityContext:
      privileged: true

# Deploy

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 apply -f pod.yaml

Error from server (Forbidden): error when creating "pod.yaml": pods "pod-psp" is forbidden: PodSecurityPolicy: unable to admit pod: [spec.containers[0].securityContext.privileged: Invalid value: true: Privileged containers are not allowed]

The PSP takes effect and the Pod is rejected.

Now remove the privileged setting from the Pod, create it again, and observe the result.

[root@k8s-master1 psp]#vim pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-psp
spec:
  containers:
  - image: busybox
    name: pod-psp
    command:
    - sleep
    - 24h

# Deploy

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 apply -f pod.yaml

pod/pod-psp created

[root@k8s-master1 psp]#kubectl get po|grep pod-psp

pod-psp 1/1 Running 0 19s

A Pod without privileged mode is created successfully.

Now create a Pod and a Deployment from the command line and observe the result.

# Create a Pod

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 run web10 --image=nginx

pod/web10 created

[root@k8s-master1 psp]#kubectl get po|grep web10

web10 1/1 Running 0 13s

# Create a Deployment

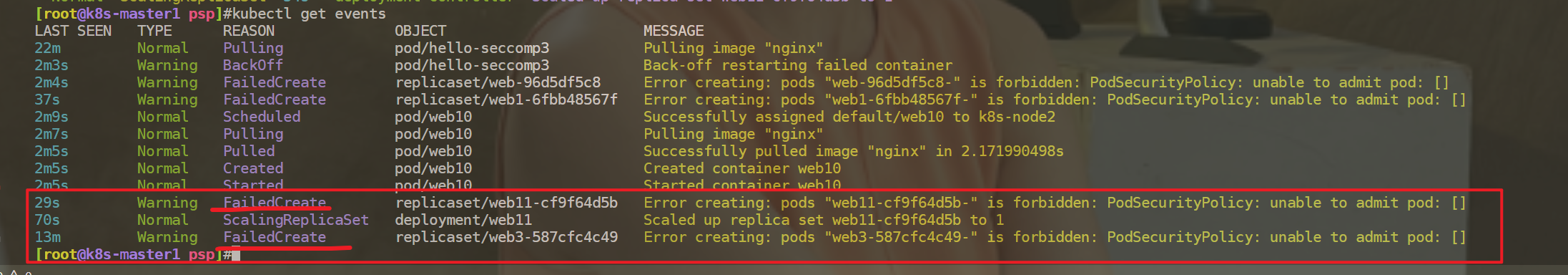

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 create deployment web11 --image=nginx

deployment.apps/web11 created

[root@k8s-master1 psp]#kubectl get deployment |grep web11

web11 0/1 0 0 38s

[root@k8s-master1 psp]#kubectl get events

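The events show why the Deployment's Pod was not created.

The Pod is created by the ReplicaSet controller rather than by aliang6, and it runs under the namespace's default service account, which has not been granted use of the PSP.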

[root@k8s-master1 psp]#kubectl get sa

NAME SECRETS AGE

aliang6 1 5h15m

default 1 221d

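What remains is to give the default SA permission to use the PSP.

There are two ways to proceed:

Specify the aliang6 service account for the Pods when creating the Deployment;

Grant the default SA permission to use the PSP with a command.

We use the second method: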

[root@k8s-master1 psp]#kubectl create rolebinding default:psp:unprivileged --role=psp:unprivileged --serviceaccount=default:default

rolebinding.rbac.authorization.k8s.io/default:psp:unprivileged created

After the binding is in place, check again:

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 create deployment web12 --image=nginx

deployment.apps/web12 created

[root@k8s-master1 psp]#kubectl get deployment|grep web12

web12 1/1 1 1 11s

[root@k8s-master1 psp]#kubectl get po|grep web12

web12-7b88cfd55f-9z5hz 1/1 Running 0 20s

The Deployment now deploys normally.

Test complete. 😘

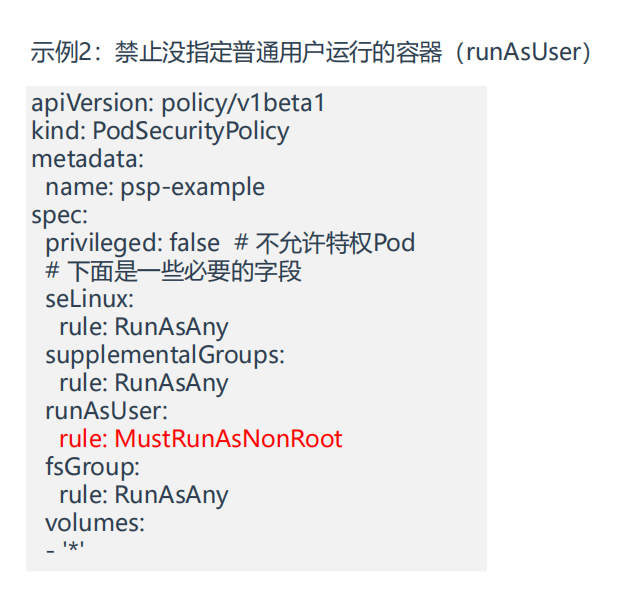

Example 2: Forbid containers that do not specify a non-root user

- Reconfigure the policy on top of the PSP above

[root@k8s-master1 psp]#vim psp.yaml

apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp-example
spec:
  privileged: false  # do not allow privileged Pods
  # The fields below are required
  seLinux:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  runAsUser:
    rule: MustRunAsNonRoot
  fsGroup:
    rule: RunAsAny
  volumes:
  - '*'

# Deploy

[root@k8s-master1 psp]#kubectl apply -f psp.yaml

podsecuritypolicy.policy/psp-example configured

- Deploy a test Pod

[root@k8s-master1 psp]#cp pod.yaml pod2.yaml

[root@k8s-master1 psp]#vim pod2.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-psp2
spec:
  containers:
  - image: lizhenliang/flask-demo:root
    name: web
    securityContext:
      runAsUser: 1000

# Deploy

[root@k8s-master1 psp]#kubectl apply -f pod2.yaml

pod/pod-psp2 created

# Verify

[root@k8s-master1 psp]#kubectl get po|grep pod-psp2

pod-psp2 1/1 Running 0 40s

[root@k8s-master1 psp]#kubectl exec -it pod-psp2 -- bash

python@pod-psp2:/data/www$ id

uid=1000(python) gid=1000(python) groups=1000(python)

python@pod-psp2:/data/www$

# Now create another Pod from the command line as a test

[root@k8s-master1 psp]#kubectl --as=system:serviceaccount:default:aliang6 run web22 --image=nginx

pod/web22 created

[root@k8s-master1 psp]#kubectl get po|grep web22

web22 0/1 CreateContainerConfigError 0 15s

[root@k8s-master1 psp]#kubectl describe po web22

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 32s default-scheduler Successfully assigned default/web22 to k8s-node2

Normal Pulled 28s kubelet Successfully pulled image "nginx" in 1.756553796s

Normal Pulled 25s kubelet Successfully pulled image "nginx" in 2.394107372s

Warning Failed 11s (x3 over 28s) kubelet Error: container has runAsNonRoot and image will run as root (pod: "web22_default(0db376b0-0da9-4119-97a1-091ed8798159)", container: web22)

# The policy takes effect as expected: the nginx image runs as root, so the container is refused.

Test complete. 😘
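The web22 failure and the pod-psp2 success follow from how a non-root requirement can be verified before a container starts. The sketch below is a simplified Python model of that decision, written under stated assumptions: the function name and error texts are hypothetical, and it only approximates the kubelet's behaviour rather than reproducing its code.

```python
from typing import Optional


def check_run_as_non_root(run_as_user: Optional[int], image_user: Optional[str]) -> None:
    """Raise ValueError if the container cannot be shown to run as non-root."""
    if run_as_user is not None:
        # An explicit runAsUser overrides whatever the image declares.
        if run_as_user == 0:
            raise ValueError("runAsUser 0 breaks the non-root requirement")
        return
    if not image_user:
        # No USER in the image means the process starts as root.
        raise ValueError("image will run as root")
    user = image_user.split(":", 1)[0]  # USER may be written as user:group
    if not user.isdigit():
        raise ValueError(f"image has non-numeric user ({user}); cannot verify it is non-root")
    if int(user) == 0:
        raise ValueError("image will run as root")


if __name__ == "__main__":
    check_run_as_non_root(1000, None)  # like pod-psp2: explicit UID, accepted
    try:
        check_run_as_non_root(None, None)  # like web22: nginx declares no USER
    except ValueError as exc:
        print(exc)
```

One consequence of this model is that an image whose Dockerfile says `USER python` cannot be verified by name alone, which is a reason to set runAsUser explicitly or to use a numeric USER.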

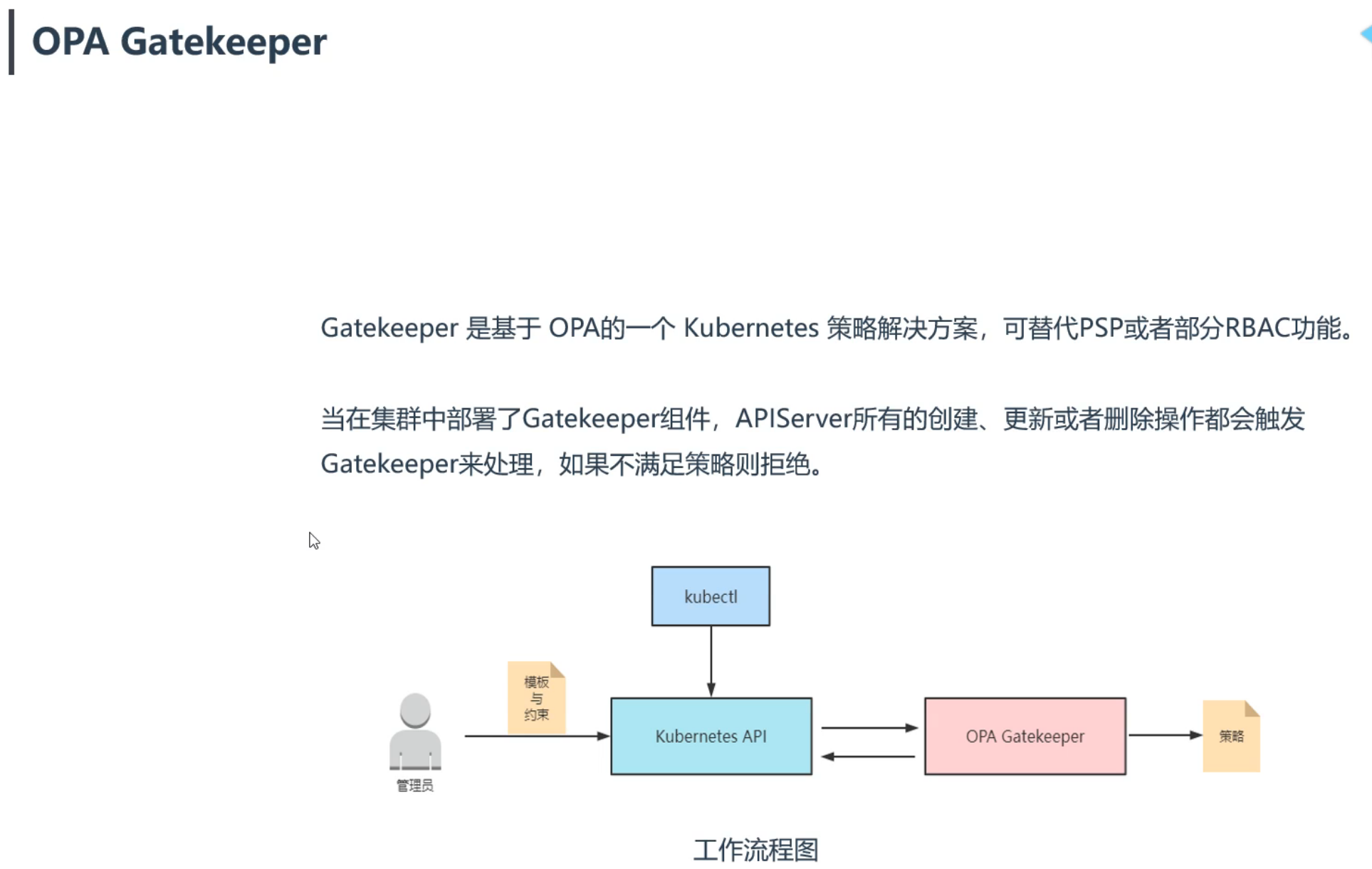

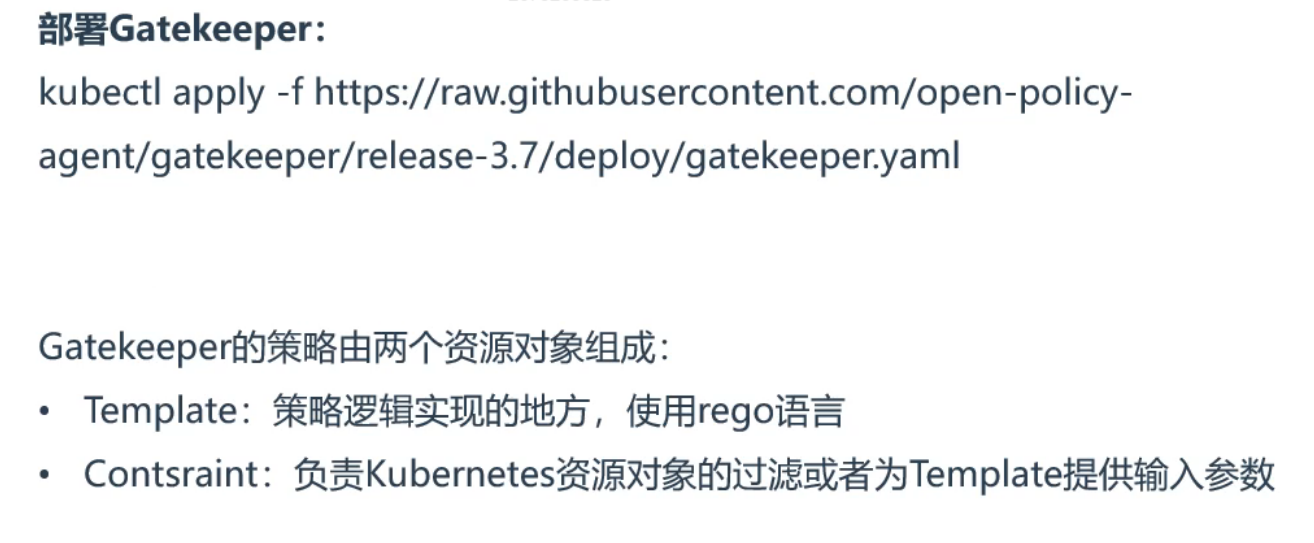

3. OPA Gatekeeper Policy Engine

- OPA overview

PodSecurityPolicy deprecation article (PSP was deprecated in Kubernetes v1.21 and removed in v1.25): https://kubernetes.io/blog/2021/04/06/podsecuritypolicy-deprecation-past-present-and-future/

Replacement proposal: https://github.com/kubernetes/enhancements/issues/2579

- OPA Gatekeeper

- OPA Gatekeeper official documentation

https://open-policy-agent.github.io/gatekeeper/website/docs/v3.7.x/howto

https://github.com/open-policy-agent/gatekeeper

https://open-policy-agent.github.io/gatekeeper/website/docs/install/

- Open Policy Agent policy language (Rego)

https://www.openpolicyagent.org/docs/latest/policy-language/

Deploying Gatekeeper

==💘 Hands-on: Deploy Gatekeeper - 2023.6.1 (tested successfully)==

- Lab environment

1. Windows 10 with VMware Workstation virtual machines;

2. Kubernetes cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

Gatekeeper manifest: https://raw.githubusercontent.com/open-policy-agent/gatekeeper/release-3.7/deploy/gatekeeper.yaml

- Lab software

Link: https://pan.baidu.com/s/1js_WMXJuiNvXxBtBP9PBcg?pwd=0820

Extraction code: 0820

2023.6.1-实战:部署Gatekeeper(测试成功)

1. Disable the PSP admission plugin (if it is enabled)

[root@k8s-master1 ~]#vim /etc/kubernetes/manifests/kube-apiserver.yaml

Replace

- --enable-admission-plugins=NodeRestriction,PodSecurityPolicy

with

- --enable-admission-plugins=NodeRestriction

2. Install OPA Gatekeeper

[root@k8s-master1 ~]#mkdir opa

[root@k8s-master1 ~]#cd opa/

[root@k8s-master1 opa]#wget https://raw.githubusercontent.com/open-policy-agent/gatekeeper/release-3.7/deploy/gatekeeper.yaml

[root@k8s-master1 opa]#kubectl apply -f gatekeeper.yaml

namespace/gatekeeper-system created

resourcequota/gatekeeper-critical-pods created

customresourcedefinition.apiextensions.k8s.io/assign.mutations.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/assignmetadata.mutations.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/configs.config.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/constraintpodstatuses.status.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/constrainttemplatepodstatuses.status.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/constrainttemplates.templates.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/modifyset.mutations.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/mutatorpodstatuses.status.gatekeeper.sh created

customresourcedefinition.apiextensions.k8s.io/providers.externaldata.gatekeeper.sh created

serviceaccount/gatekeeper-admin created

podsecuritypolicy.policy/gatekeeper-admin created

role.rbac.authorization.k8s.io/gatekeeper-manager-role created

clusterrole.rbac.authorization.k8s.io/gatekeeper-manager-role created

rolebinding.rbac.authorization.k8s.io/gatekeeper-manager-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/gatekeeper-manager-rolebinding created

secret/gatekeeper-webhook-server-cert created

service/gatekeeper-webhook-service created

deployment.apps/gatekeeper-audit created

deployment.apps/gatekeeper-controller-manager created

poddisruptionbudget.policy/gatekeeper-controller-manager created

mutatingwebhookconfiguration.admissionregistration.k8s.io/gatekeeper-mutating-webhook-configuration created

validatingwebhookconfiguration.admissionregistration.k8s.io/gatekeeper-validating-webhook-configuration created

[root@k8s-master1 opa]#

# Check

[root@k8s-master1 opa]#kubectl get po -ngatekeeper-system

NAME READY STATUS RESTARTS AGE

gatekeeper-audit-5b869c66f9-6qvs5 1/1 Running 0 76s

gatekeeper-controller-manager-85498f495c-46ghm 1/1 Running 0 76s

gatekeeper-controller-manager-85498f495c-8hwkz 1/1 Running 0 76s

gatekeeper-controller-manager-85498f495c-xc8db 1/1 Running 0 76s

Once the deployment is complete, the two cases below can be used to test it. 😘

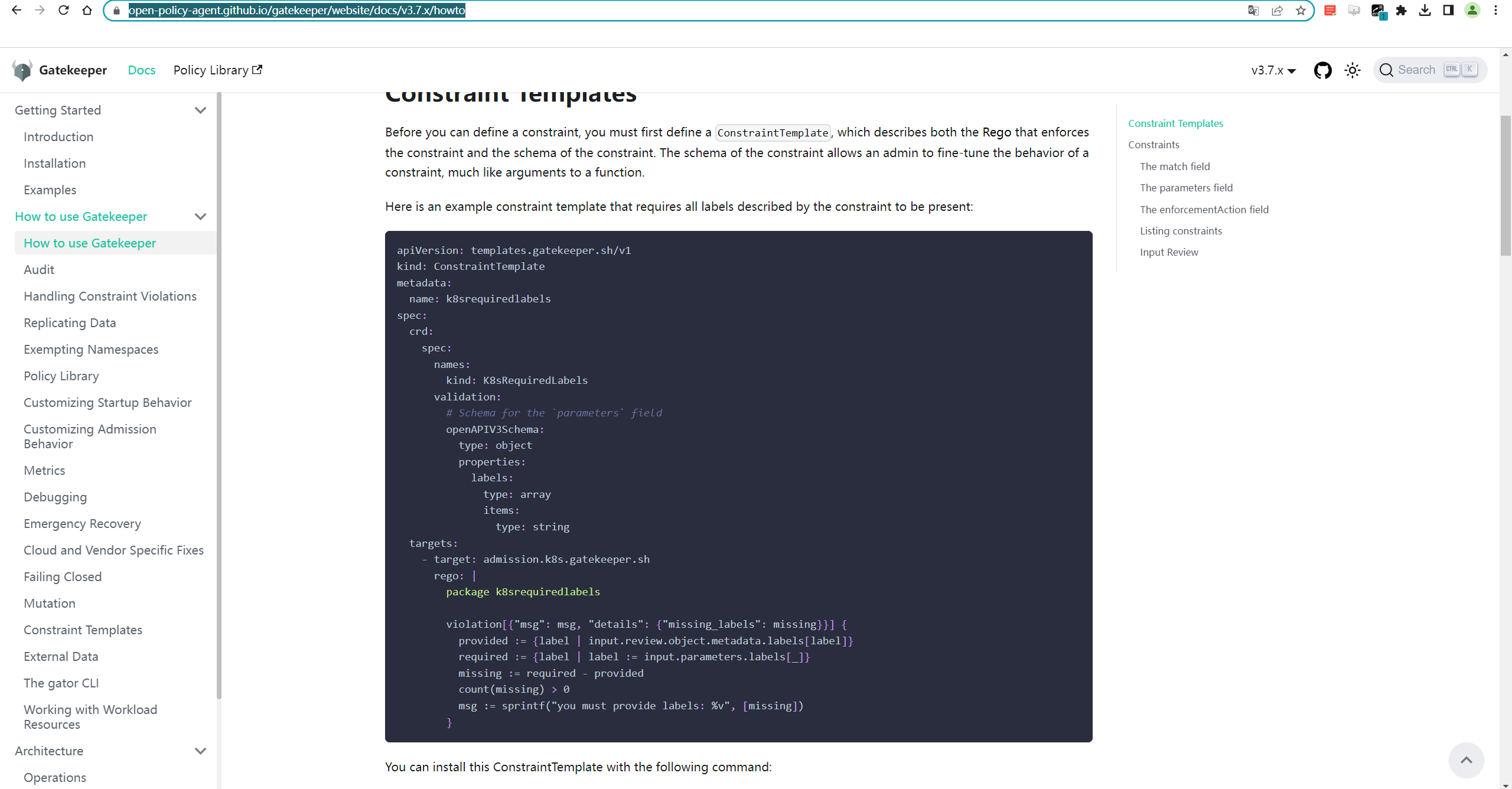

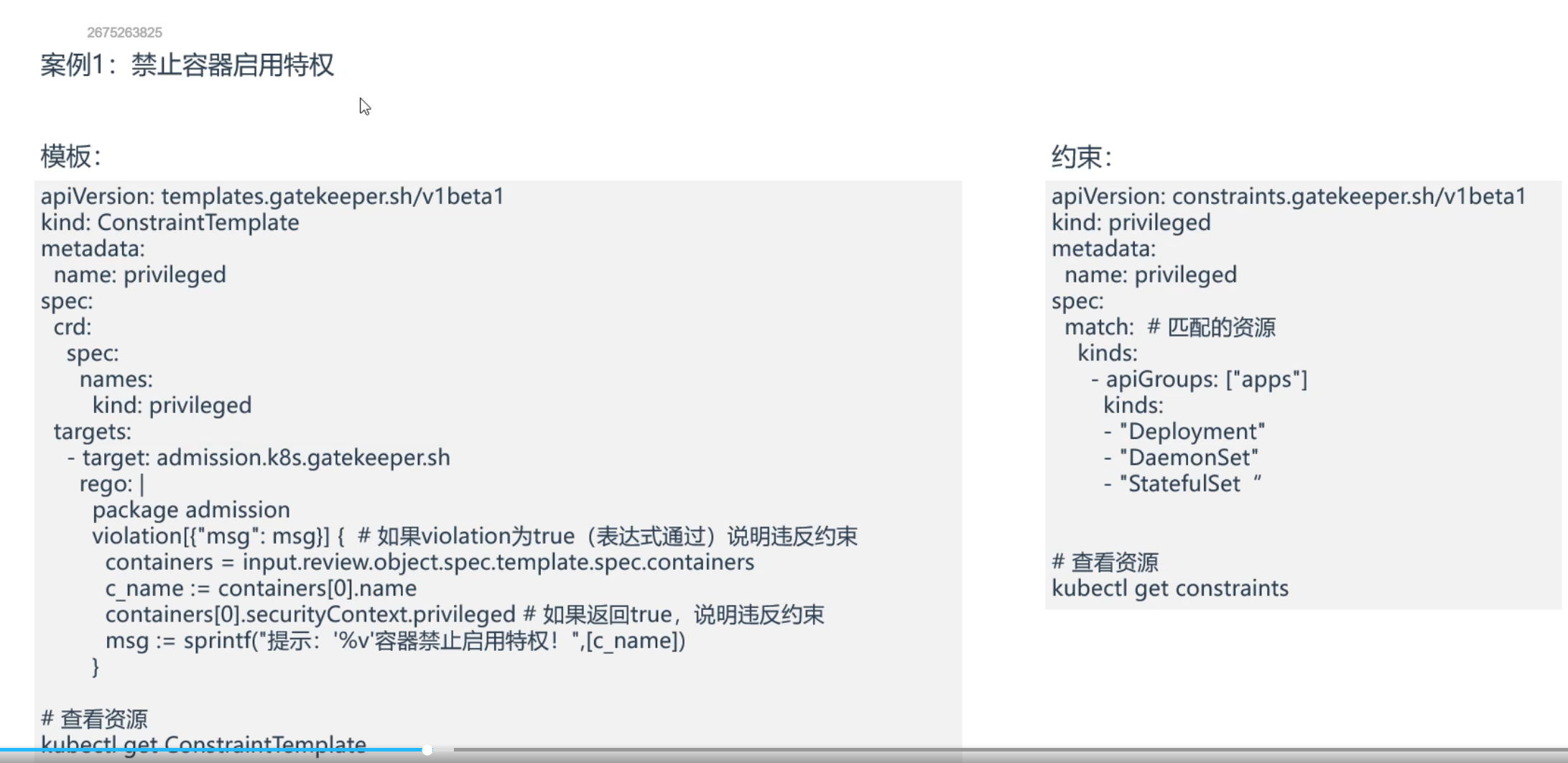

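Case 1: Forbid privileged containers

==💘 Case 1: Forbid privileged containers - 2023.6.1 (tested successfully)==

- Lab environment

1. Windows 10 with VMware Workstation virtual machines;

2. Kubernetes cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

Gatekeeper manifest: https://raw.githubusercontent.com/open-policy-agent/gatekeeper/release-3.7/deploy/gatekeeper.yaml

- Lab software

Link: https://pan.baidu.com/s/1rlJnp1MScKcC1G8UVaIikA?pwd=0820

Extraction code: 0820

2023.6.1-opa-code

- Gatekeeper is already installed.

- Deploy privileged_tpl.yaml and privileged_constraints.yaml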

[root@k8s-master1 opa]#vim privileged_tpl.yaml

apiVersion: templates.gatekeeper.sh/v1
kind: ConstraintTemplate
metadata:
  name: privileged
spec:
  crd:
    spec:
      names:
        kind: privileged
  targets:
    - target: admission.k8s.gatekeeper.sh
      rego: |
        package admission

        # A violation is reported when every expression in the rule body holds.
        violation[{"msg": msg}] {
          containers = input.review.object.spec.template.spec.containers
          c_name := containers[0].name
          # True here means the constraint is violated (only the first container is checked).
          containers[0].securityContext.privileged
          # The message reads: "Notice: container '%v' must not enable privileged mode!"
          msg := sprintf("提示: '%v'容器禁止启用特权!", [c_name])
        }

[root@k8s-master1 opa]#vim privileged_constraints.yaml

apiVersion: constraints.gatekeeper.sh/v1beta1
kind: privileged
metadata:
  name: privileged
spec:
  match:  # resources the constraint applies to
    kinds:
      - apiGroups: ["apps"]
        kinds:
        - "Deployment"
        - "DaemonSet"
        - "StatefulSet"

# Deploy

[root@k8s-master1 opa]#kubectl apply -f privileged_tpl.yaml

constrainttemplate.templates.gatekeeper.sh/privileged created

[root@k8s-master1 opa]#kubectl apply -f privileged_constraints.yaml

privileged.constraints.gatekeeper.sh/privileged created

# Check the resources

[root@k8s-master1 opa]#kubectl get ConstraintTemplate

NAME AGE

privileged 71s

[root@k8s-master1 opa]#kubectl get constraints

NAME AGE

privileged 2m9s
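The template above inspects only `containers[0]`, so a privileged second container in the same Pod template would not be caught. A minimal sketch of a variant that iterates over every container could look like the following. The file name `privileged_all_tpl.yaml`, the kind `privilegedall`, and the English message are hypothetical names chosen for illustration; this variant was not part of the tested lab, and `initContainers` would need a similar rule.

```shell
#!/bin/sh
# Sketch only: writes a hypothetical ConstraintTemplate variant to a file.
# The names privilegedall and privileged_all_tpl.yaml are illustrative assumptions.
tpl="${TMPDIR:-/tmp}/privileged_all_tpl.yaml"

cat > "$tpl" <<'EOF'
apiVersion: templates.gatekeeper.sh/v1
kind: ConstraintTemplate
metadata:
  name: privilegedall
spec:
  crd:
    spec:
      names:
        kind: privilegedall
  targets:
    - target: admission.k8s.gatekeeper.sh
      rego: |
        package admission

        violation[{"msg": msg}] {
          # containers[_] iterates over every container, not only the first one
          c := input.review.object.spec.template.spec.containers[_]
          c.securityContext.privileged
          msg := sprintf("container '%v' must not run in privileged mode", [c.name])
        }
EOF

echo "wrote $tpl"
```

To try it, the file could be applied with `kubectl apply -f`, together with a constraint of kind `privilegedall` that uses the same `match` block as privileged_constraints.yaml.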

- Deploy a test Deployment

[root@k8s-master1 opa]#kubectl create deployment web --image=nginx --dry-run=client -oyaml >deployment.yaml

[root@k8s-master1 opa]#vim deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: web

name: web61

spec:

replicas: 1

selector:

matchLabels:

app: web

template:

metadata:

labels:

app: web

spec:

containers:

- image: nginx

name: nginx

securityContext:

privileged: true

# Deploy

[root@k8s-master1 opa]#kubectl apply -f deployment.yaml

Error from server ([privileged] 提示: 'nginx'容器禁止启用特权!): error when creating "deployment.yaml": admission webhook "validation.gatekeeper.sh" denied the request: [privileged] 提示: 'nginx'容器禁止启用特权!

# The deployment is rejected, as expected. Now disable privileged and deploy again to compare.

[root@k8s-master1 opa]#vim deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: web

name: web61

spec:

replicas: 1

selector:

matchLabels:

app: web

template:

metadata:

labels:

app: web

spec:

containers:

- image: nginx

name: nginx

#securityContext:

# privileged: true

[root@k8s-master1 opa]#kubectl apply -f deployment.yaml

deployment.apps/web61 created

[root@k8s-master1 opa]#kubectl get deployment|grep web61

web61 1/1 1 1 18s

# With privileged disabled, the Deployment is created normally

Test complete. 😘

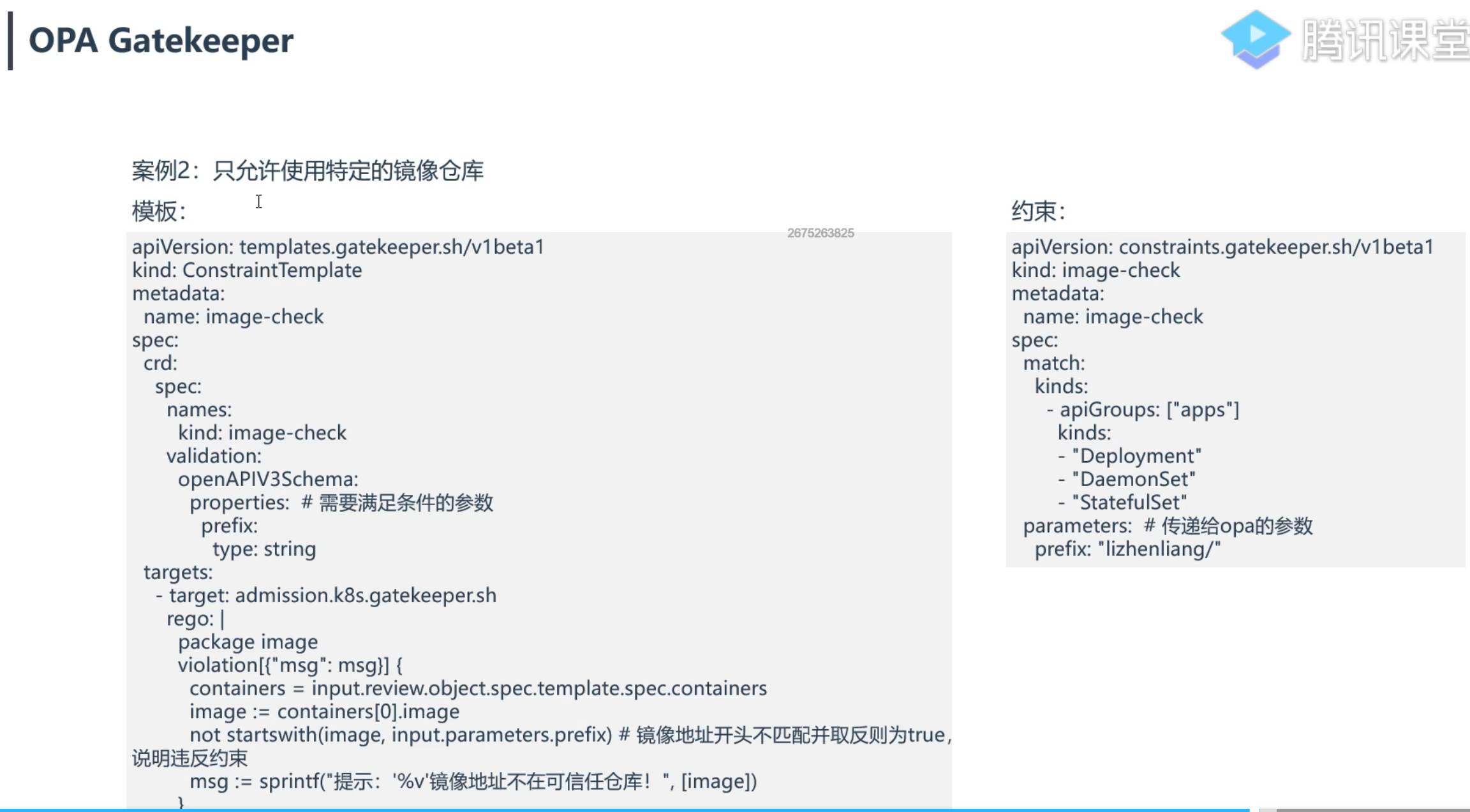

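Case 2: Allow only a specific image registry

==💘 Case 2: Allow only a specific image registry - 2023.6.1 (tested successfully)==

- Lab environment

1. Windows 10, VMware Workstation virtual machines;

2. k8s cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

Gatekeeper manifest: https://raw.githubusercontent.com/open-policy-agent/gatekeeper/release-3.7/deploy/gatekeeper.yaml

- Lab files

Link: https://pan.baidu.com/s/1rlJnp1MScKcC1G8UVaIikA?pwd=0820

Extraction code: 0820

2023.6.1-opa-code

- Gatekeeper is already installed.

- Deploy the resources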

[root@k8s-master1 opa]#cp privileged_tpl.yaml image-check_tpl.yaml

[root@k8s-master1 opa]#cp privileged_constraints.yaml image-check_constraints.yaml

[root@k8s-master1 opa]#vim image-check_tpl.yaml

apiVersion: templates.gatekeeper.sh/v1

kind: ConstraintTemplate

metadata:

name: image-check

spec:

crd:

spec:

names:

kind: image-check

validation:

# Schema for the `parameters` field

openAPIV3Schema:

type: object

properties:

prefix:

type: string

targets:

- target: admission.k8s.gatekeeper.sh

rego: |

package image

violation[{"msg": msg}] {

containers = input.review.object.spec.template.spec.containers

image := containers[0].image

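not startswith(image, input.parameters.prefix) # true when the image does not start with the allowed prefix, i.e. a violation

msg := sprintf("提示: '%v'镜像地址不再可信任仓库", [image]) # message text: "Notice: image '%v' is not from a trusted registry"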

}

[root@k8s-master1 opa]#vim image-check_constraints.yaml.yaml

apiVersion: constraints.gatekeeper.sh/v1beta1

kind: image-check

metadata:

name: image-check

spec:

match:

kinds:

- apiGroups: ["apps"]

kinds:

- "Deployment"

- "DaemonSet"

- "StatefulSet"

parameters: # parameters passed to OPA

prefix: "lizhenliang/"

# Deploy:

[root@k8s-master1 opa]#kubectl apply -f image-check_tpl.yaml

constrainttemplate.templates.gatekeeper.sh/image-check created

[root@k8s-master1 opa]#kubectl apply -f image-check_constraints.yaml.yaml

image-check.constraints.gatekeeper.sh/image-check created

# Check the resources

[root@k8s-master1 opa]#kubectl get constrainttemplate

NAME AGE

image-check 71s

privileged 59m

[root@k8s-master1 opa]#kubectl get constraints

NAME AGE

image-check.constraints.gatekeeper.sh/image-check 70s

NAME AGE

privileged.constraints.gatekeeper.sh/privileged 59m

- Create test Deployments

[root@k8s-master1 opa]#kubectl create deployment web666 --image=nginx

error: failed to create deployment: admission webhook "validation.gatekeeper.sh" denied the request: [image-check] 提示: 'nginx'镜像地址不再可信任仓库

[root@k8s-master1 opa]#kubectl create deployment web666 --image=lizhenliang/nginx

deployment.apps/web666 created

As expected. 😘
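The rule is a plain string-prefix check, so the trailing slash in `lizhenliang/` matters (without it, `lizhenliangx/nginx` would also pass), and a fully qualified reference such as `docker.io/lizhenliang/nginx` would be rejected. Before enabling such a constraint on a cluster that already runs workloads, it can help to list which images would fail. The helper below is a minimal sketch of that check; the function name `untrusted_images` is hypothetical.

```shell
#!/bin/sh
# untrusted_images PREFIX
# Reads image references from stdin (one per line) and prints those that do
# not start with PREFIX. Returns 1 if any were printed, 0 otherwise.
untrusted_images() {
    prefix=$1
    status=0
    while IFS= read -r image || [ -n "$image" ]; do
        [ -n "$image" ] || continue          # skip empty lines
        case "$image" in
            "$prefix"*) ;;                   # starts with the trusted prefix
            *) printf '%s\n' "$image"; status=1 ;;
        esac
    done
    return "$status"
}
```

Assuming kubectl access, the image list could be produced with something like `kubectl get deployments -A -o jsonpath='{range .items[*]}{range .spec.template.spec.containers[*]}{.image}{"\n"}{end}{end}'` and piped into `untrusted_images lizhenliang/`.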

4. Storing sensitive data in Secrets

See the separate document.

5. Running containers in a security sandbox: gVisor

Introduction to gVisor

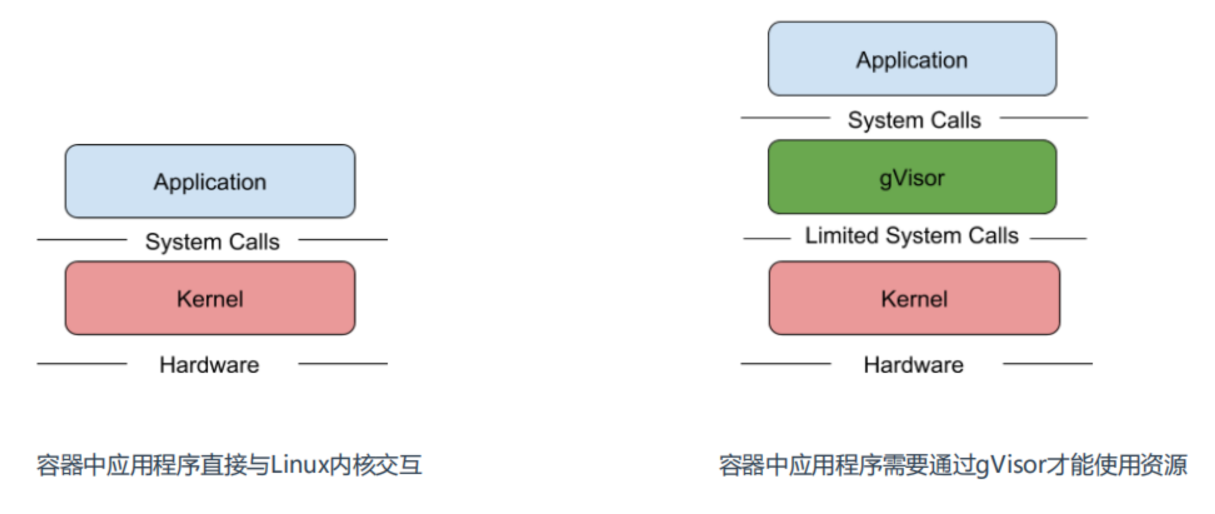

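As is well known, applications in a container call the Linux kernel's system calls directly, so container isolation is relatively weak. The kernel keeps strengthening its own security features, but its code is extremely complex and CVEs keep appearing. Reducing this risk therefore means improving isolation and cutting the dependence of in-container programs on the host kernel.

gVisor, a container sandbox open-sourced by Google, takes exactly this approach: it sits between the containerized application and the host kernel, implements most Linux system calls itself, and redirects the system calls of container processes to gVisor instead of the host kernel.

gVisor is OCI-compatible and integrates seamlessly with Docker and K8s, so it is easy to adopt.

Project: https://github.com/google/gvisor

gVisor architecture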

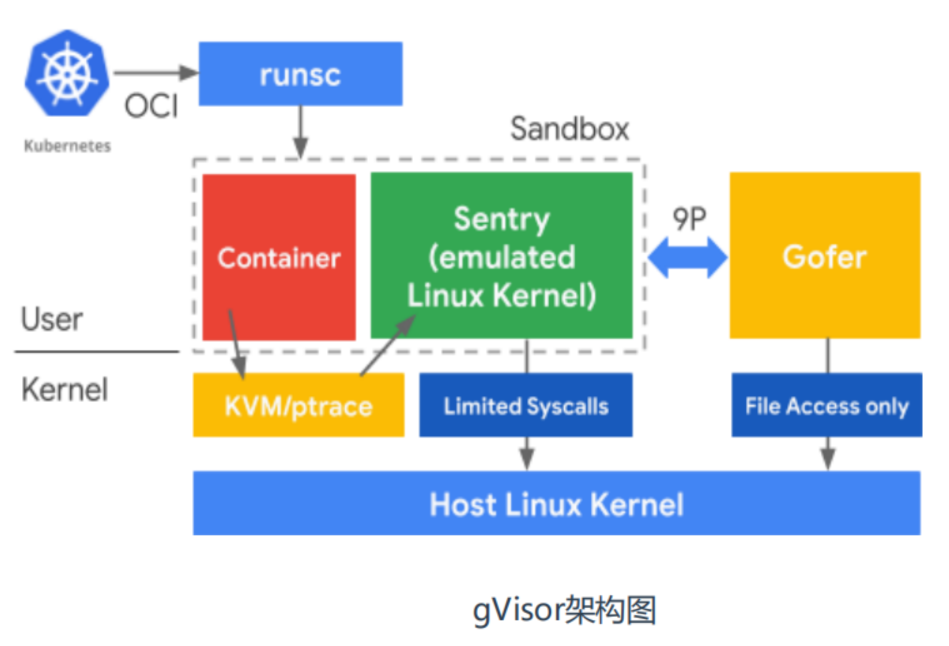

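gVisor consists of three components:

• runsc: a runtime engine that creates and destroys containers.

• Sentry: handles the system calls made by programs in the container.

• Gofer: a proxy for file system operations; I/O requests are forwarded to the host through it.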

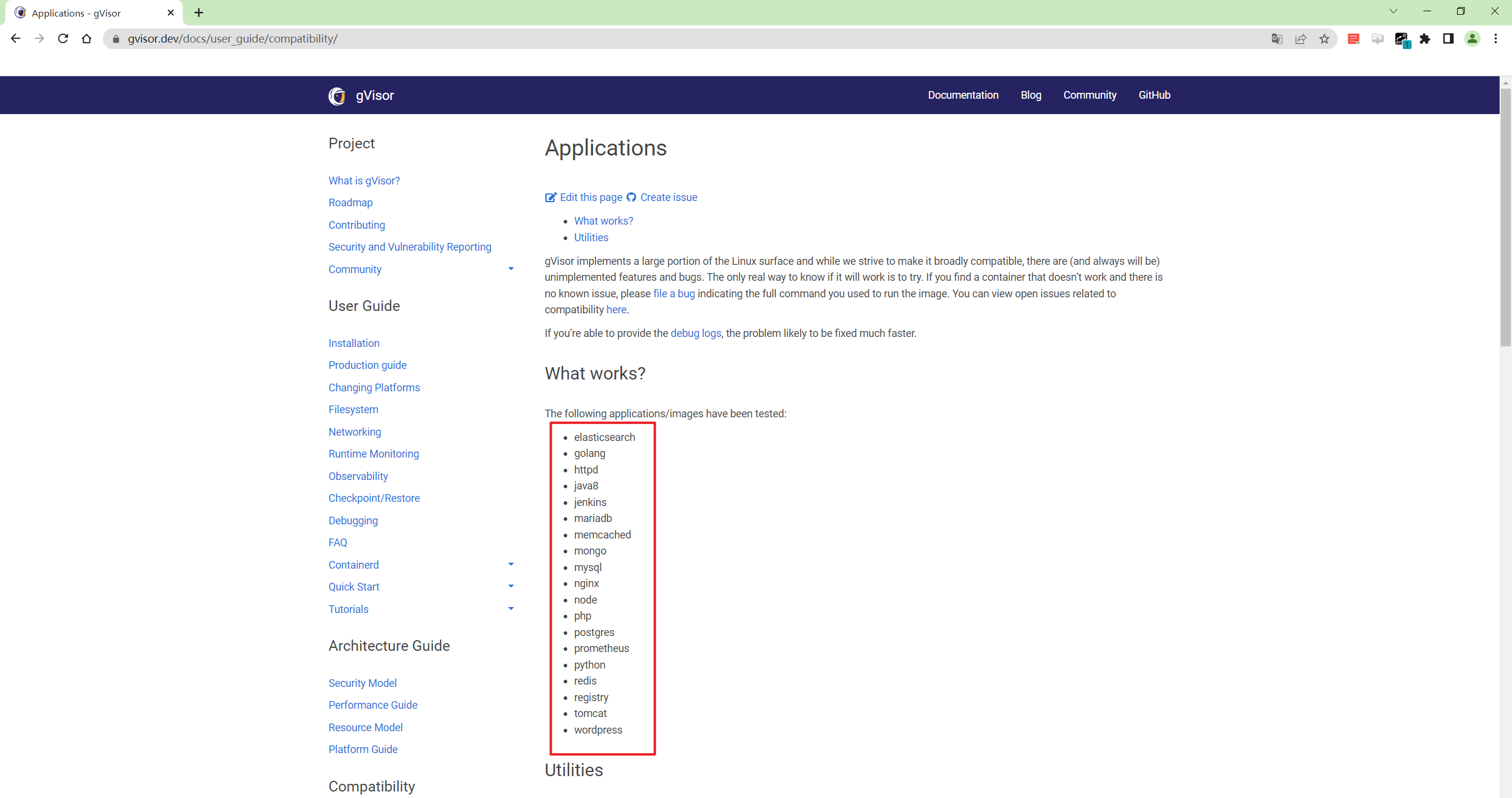

- Applications and tools already tested

https://gvisor.dev/docs/user_guide/compatibility/

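Case 1: Integrating gVisor with Docker

==💘 Hands-on: Integrating gVisor with Docker - 2023.6.2 (tested successfully)==

- Lab environment

1. Windows 10, VMware Workstation virtual machines;

2. k8s cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

- Lab files

Link: https://pan.baidu.com/s/1xsLYDDsdonQzFUwWCPCdhg?pwd=0820

Extraction code: 0820

2023.6.2-gvisor

- Base environment

Upgrade the kernel

gVisor kernel requirement: Linux 3.17+

CentOS 7 ships kernel 3.10 and therefore needs a kernel upgrade; Ubuntu does not.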
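Whether a given node needs the upgrade can be checked by comparing its `uname -r` output with the minimum version. The helper below is a minimal sketch of such a comparison; the function name `kernel_at_least` is hypothetical, and the 3.17 threshold is simply the requirement quoted above, passed in as arguments.

```shell
#!/bin/sh
# kernel_at_least RELEASE MAJOR MINOR
# Succeeds when RELEASE (in `uname -r` format, e.g. 3.10.0-957.el7.x86_64)
# is at least MAJOR.MINOR.
kernel_at_least() {
    release=$1
    want_major=$2
    want_minor=$3
    major=${release%%.*}        # text before the first dot
    rest=${release#*.}          # text after the first dot
    minor=${rest%%[!0-9]*}      # leading digits of the remainder
    [ "$major" -gt "$want_major" ] && return 0
    [ "$major" -eq "$want_major" ] && [ "$minor" -ge "$want_minor" ]
}
```

On a node, `kernel_at_least "$(uname -r)" 3 17 || echo "kernel upgrade needed"` would print the hint for the stock CentOS 7 kernel and stay silent after the upgrade.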

Kernel upgrade steps on CentOS 7:

# Check the current kernel

[root@k8s-node1 ~]#uname -r

3.10.0-957.el7.x86_64

# Upgrade the kernel

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

yum --enablerepo=elrepo-kernel install kernel-ml-devel kernel-ml -y

grub2-set-default 0

reboot

# Confirm that the upgrade succeeded

[root@k8s-node1 ~]#uname -r

6.3.5-1.el7.elrepo.x86_64

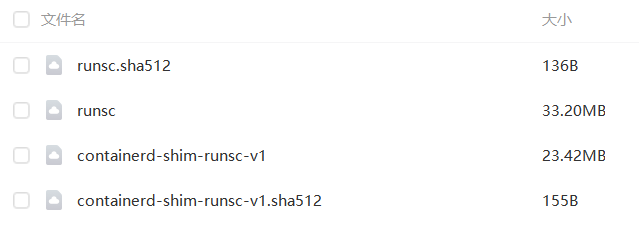

1. Prepare the gVisor binaries

# The package provided with the lab files is sufficient here

[root@k8s-node1 ~]#mkdir gvisor

[root@k8s-node1 ~]#cd gvisor/

[root@k8s-node1 gvisor]#unzip gvisor.zip

Archive: gvisor.zip

inflating: runsc.sha512

inflating: runsc

inflating: containerd-shim-runsc-v1

inflating: containerd-shim-runsc-v1.sha512
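The archive also contains `.sha512` files, which the steps here do not use. Before the binaries are made executable and moved, they could be verified against those checksums. The helper below is a minimal sketch; the function name `verify_sha512` is hypothetical, and it assumes each `.sha512` file is in the format that `sha512sum -c` reads (hash followed by the file name), which has not been confirmed for this particular package.

```shell
#!/bin/sh
# verify_sha512 DIR
# Runs `sha512sum -c` on every *.sha512 file in DIR and fails on the
# first mismatch. Runs in a subshell so the caller's directory is unchanged.
verify_sha512() {
    (
        cd "$1" || exit 1
        for sumfile in *.sha512; do
            # An unmatched glob stays literal, so check that the file exists
            [ -e "$sumfile" ] || { echo "no .sha512 files in $1" >&2; exit 1; }
            sha512sum -c "$sumfile" || exit 1
        done
    )
}
```

Run as `verify_sha512 /root/gvisor` while the binaries still sit next to their checksum files, i.e. before they are moved to /usr/local/bin.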

[root@k8s-node1 gvisor]#chmod +x containerd-shim-runsc-v1 runsc

[root@k8s-node1 gvisor]#mv containerd-shim-runsc-v1 runsc /usr/local/bin/

# Verify

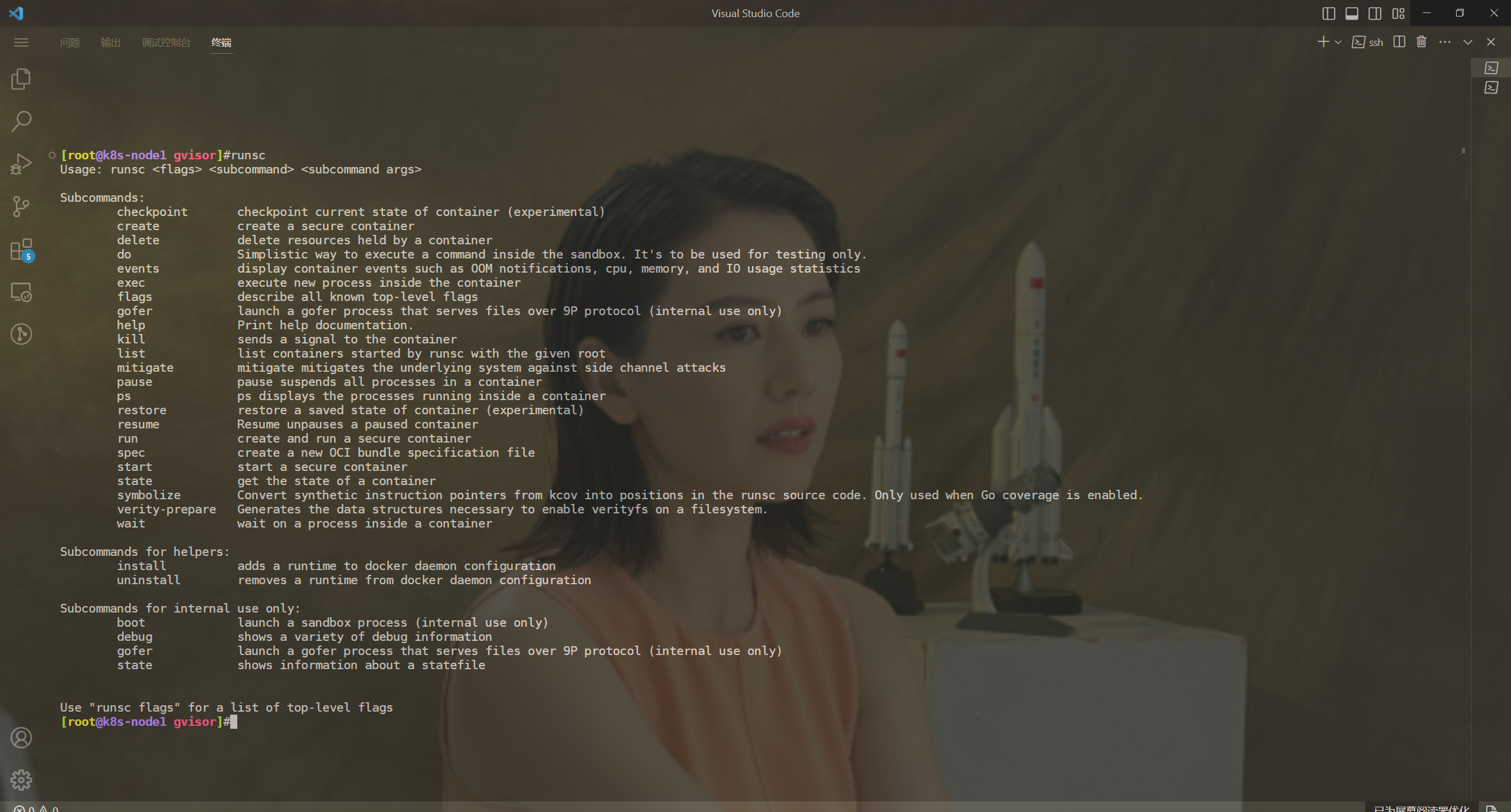

[root@k8s-node1 gvisor]#runsc

Usage: runsc <flags> <subcommand> <subcommand args>

Subcommands:

checkpoint checkpoint current state of container (experimental)

create create a secure container

delete delete resources held by a container

do Simplistic way to execute a command inside the sandbox. It's to be used for testing only.

events display container events such as OOM notifications, cpu, memory, and IO usage statistics

exec execute new process inside the container

……

2. Configure Docker to use gVisor

[root@k8s-node1 gvisor]#cat /etc/docker/daemon.json

{

"registry-mirrors":["https://dockerhub.azk8s.cn","http://hub-mirror.c.163.com","http://qtid6917.mirror.aliyuncs.com"]

}

[root@k8s-node1 gvisor]#runsc install

2023/06/02 10:21:25 Added runtime "runsc" with arguments [] to "/etc/docker/daemon.json".

[root@k8s-node1 gvisor]#cat /etc/docker/daemon.json

{

"registry-mirrors": [

"https://dockerhub.azk8s.cn",

"http://hub-mirror.c.163.com",

"http://qtid6917.mirror.aliyuncs.com"

],

"runtimes": {

"runsc": {

"path": "/usr/local/bin/runsc"

}

}

}

[root@k8s-node1 gvisor]#systemctl restart docker

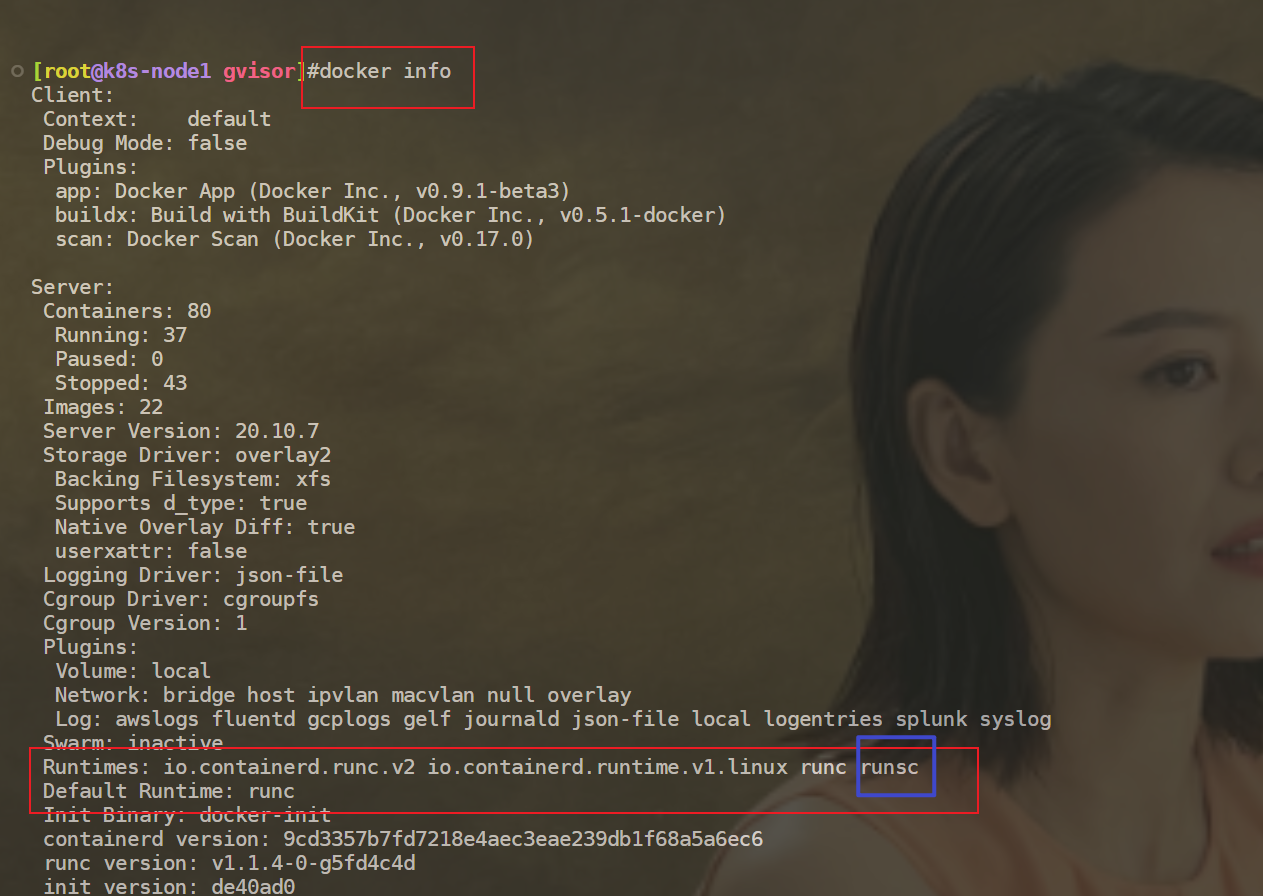

3. Verify

docker info # the list of runtimes now also includes runsc

Run containers with gVisor:

[root@k8s-node1 gvisor]#docker run -d --name=web-normal nginx

f826403374fa703f9715bfa218ea405bbbbf0cf765ac72defc7f94934cb7e0cf

[root@k8s-node1 gvisor]#docker run -d --name=web-gvisor --runtime=runsc nginx

c1eb1405fd9a09db41525ac68061f8c324dd6a3e5ae9d90828c7d257d3ab3294

[root@k8s-node1 gvisor]#docker exec web-normal uname -r

6.3.5-1.el7.elrepo.x86_64

[root@k8s-node1 gvisor]#docker exec web-gvisor uname -r

4.4.0

[root@k8s-node1 gvisor]#uname -r

6.3.5-1.el7.elrepo.x86_64

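# The container created with runsc reports kernel 4.4.0, while the container created with the default runc reports the host kernel, 6.3.5-1.

# The gVisor boot log can also be viewed with the following command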

[root@k8s-node1 gvisor]#docker run --runtime=runsc nginx dmesg

[ 0.000000] Starting gVisor...

[ 0.130598] Searching for socket adapter...

[ 0.134223] Granting licence to kill(2)...

[ 0.500262] Digging up root...

[ 0.952825] Reticulating splines...

[ 1.112800] Waiting for children...

[ 1.355414] Committing treasure map to memory...

[ 1.740439] Conjuring /dev/null black hole...

[ 2.014772] Gathering forks...

[ 2.079167] Feeding the init monster...

[ 2.452438] Generating random numbers by fair dice roll...

[ 2.566791] Ready!

[root@k8s-node1 gvisor]#

Test complete. 😘

- Reference

Reference: https://gvisor.dev/docs/user_guide/install/

Case 2: Integrating gVisor with containerd

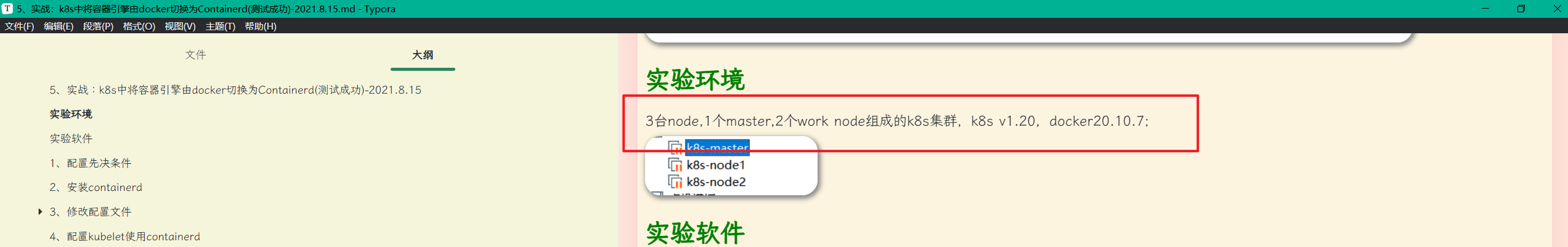

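==💘 Hands-on: Integrating gVisor with containerd - 2023.6.2 (tested successfully)==

- Lab environment

1. Windows 10, VMware Workstation virtual machines;

2. k8s cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

containerd://1.4.11

- Lab files

Link: https://pan.baidu.com/s/1ekAmnKS_iLjaGgNFxjZoXg?pwd=0820

Extraction code: 0820

2023.6.3-gVisor与Containerd集成-code

The following switches one node of the (Docker-based) k8s cluster to the containerd runtime:

The steps are the same as in the earlier document; the only difference is the additional runsc entry in /etc/containerd/config.toml.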

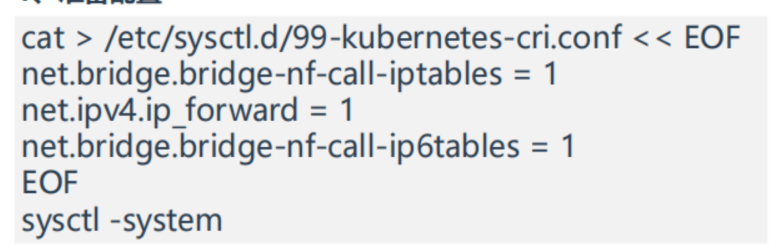

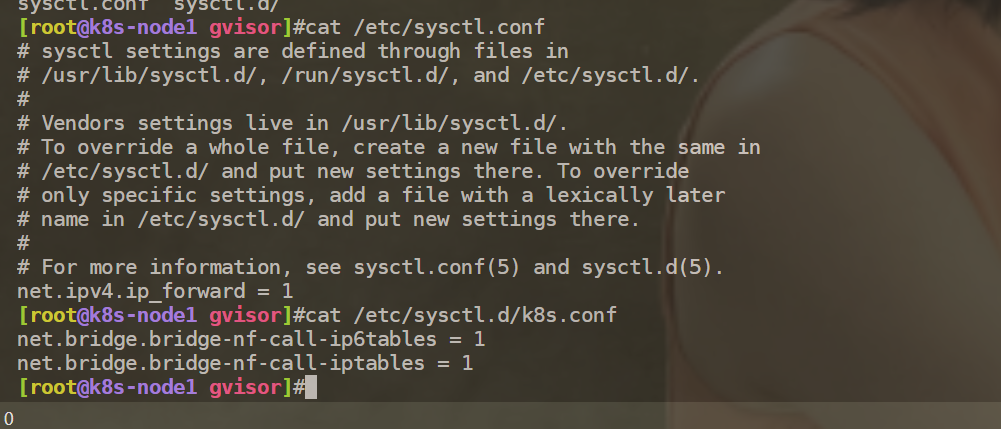

1. Prepare the prerequisites

This part was already configured when k8s was installed (omitted here).

2. Install

This lab installs cri-containerd-cni-1.4.11-linux-amd64.tar.gz: https://github.com/containerd/containerd/releases/tag/v1.4.11

tar -C / -xzf cri-containerd-cni-1.4.11-linux-amd64.tar.gz

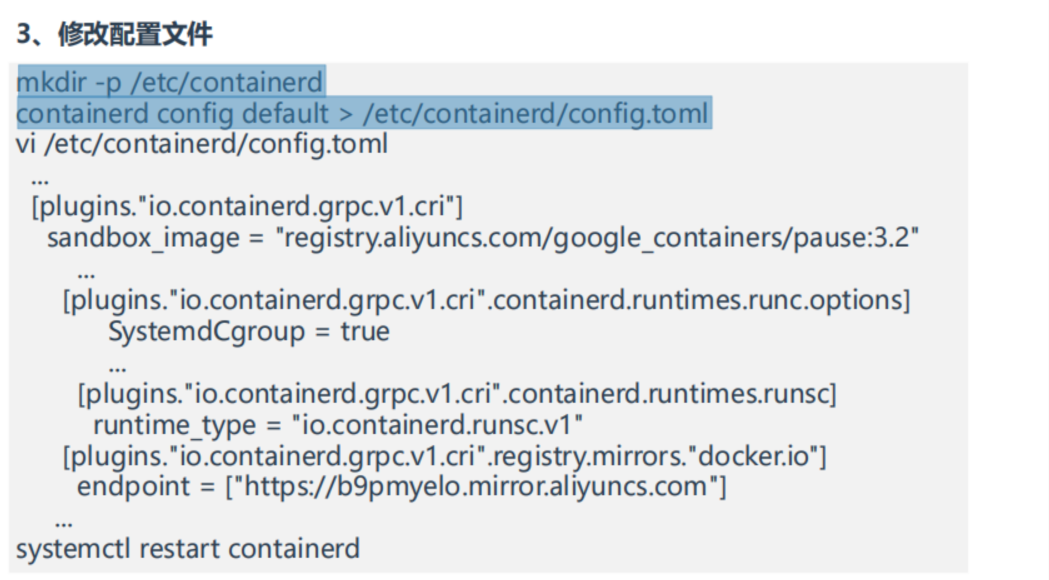

3. Edit the configuration file:

• set the pause image address

• change the cgroup driver to systemd

• add the runsc container runtime

• configure a Docker registry mirror

mkdir -p /etc/containerd

containerd config default > /etc/containerd/config.toml

vim /etc/containerd/config.toml

...

[plugins."io.containerd.grpc.v1.cri"]

sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.2"

...

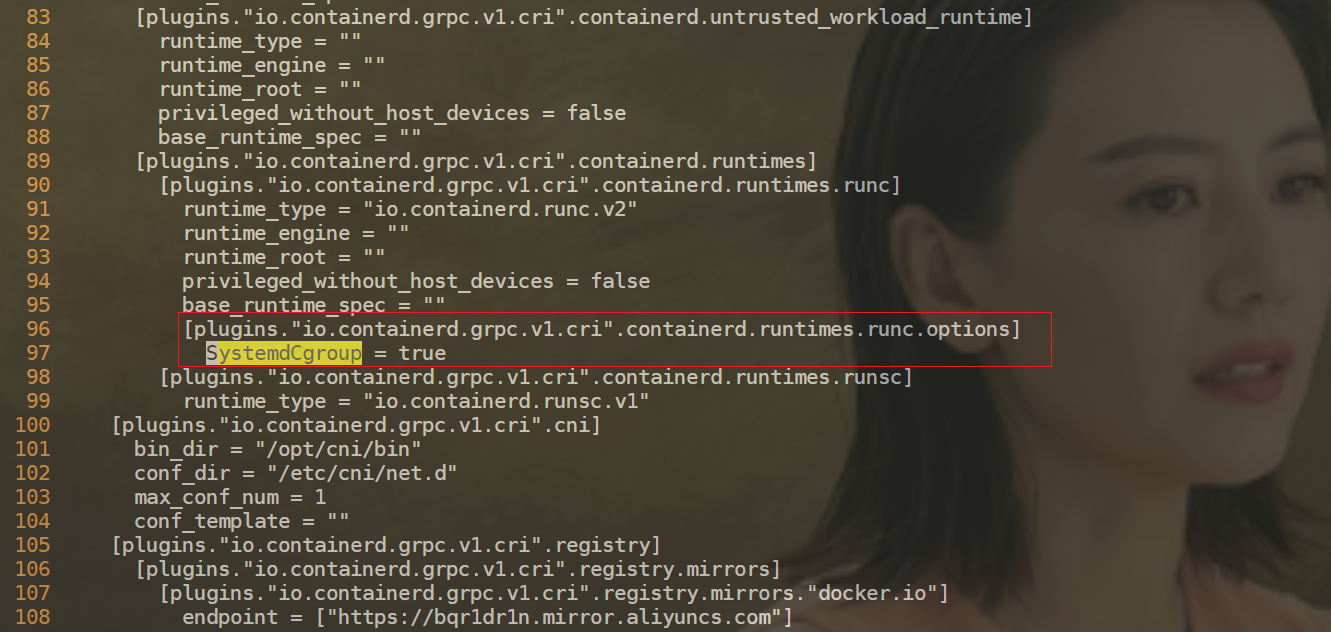

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

SystemdCgroup = true

...

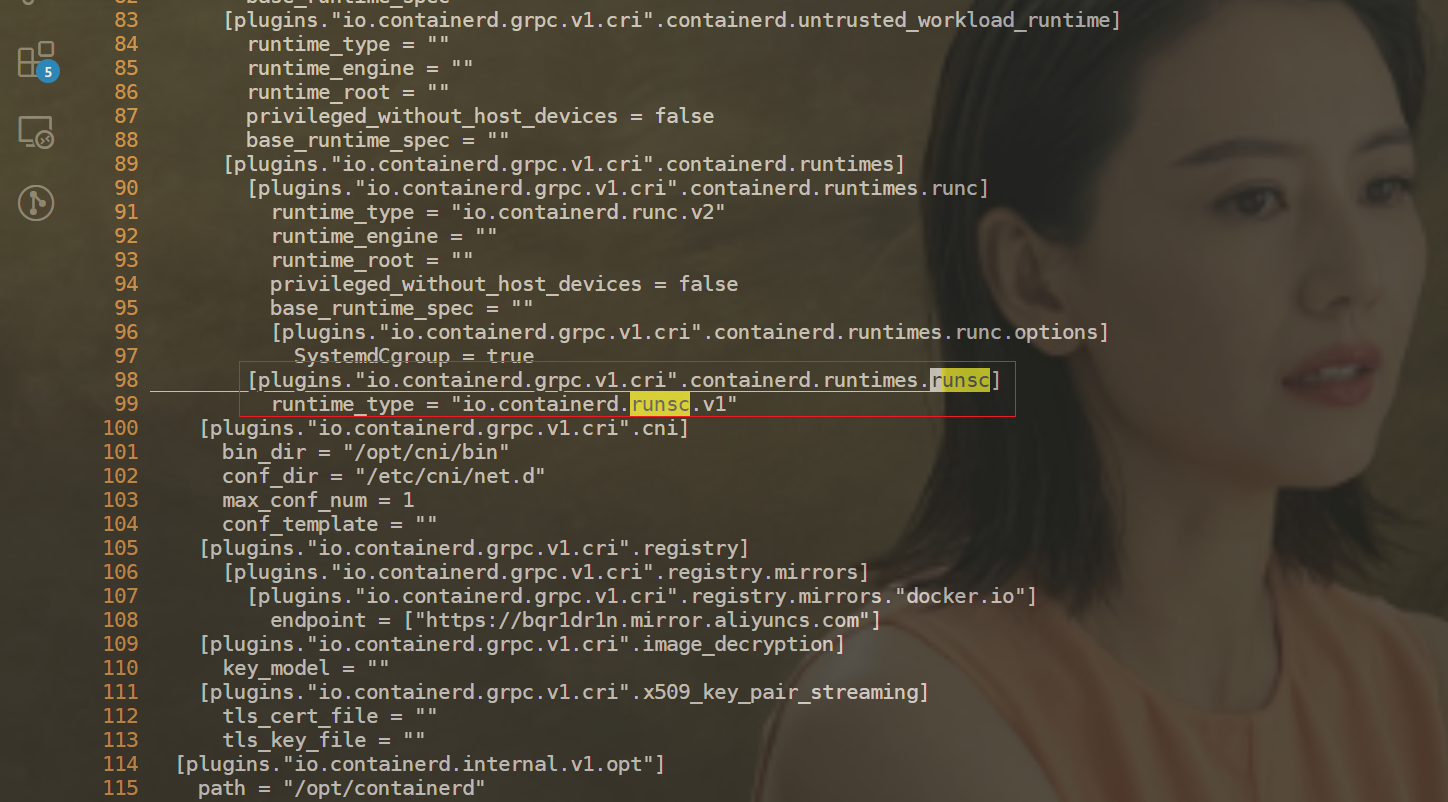

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runsc]

runtime_type = "io.containerd.runsc.v1"

[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]

endpoint = ["https://b9pmyelo.mirror.aliyuncs.com"]

...

# Stop Docker

[root@k8s-node1 gvisor]#systemctl stop docker

Warning: Stopping docker.service, but it can still be activated by:

docker.socket

[root@k8s-node1 gvisor]#systemctl stop docker.socket

[root@k8s-node1 gvisor]#docker info

Client:

Context: default

Debug Mode: false

Plugins:

app: Docker App (Docker Inc., v0.9.1-beta3)

buildx: Build with BuildKit (Docker Inc., v0.5.1-docker)

scan: Docker Scan (Docker Inc., v0.17.0)

Server:

ERROR: Cannot connect to the Docker daemon at unix:///var/run/docker.sock. Is the docker daemon running?

errors pretty printing info

[root@k8s-node1 gvisor]#ps -ef|grep docker

root 35418 26329 0 11:19 pts/0 00:00:00 grep --color=auto docker

[root@k8s-node1 ~]#containerd -v

containerd github.com/containerd/containerd v1.6.10 770bd0108c32f3fb5c73ae1264f7e503fe7b2661

[root@k8s-node1 ~]#runc -v

runc version 1.1.4

commit: v1.1.4-0-g5fd4c4d1

spec: 1.0.2-dev

go: go1.18.8

libseccomp: 2.5.1

# Restart containerd

[root@k8s-node1 gvisor]#systemctl restart containerd

4. Configure kubelet to use containerd

[root@k8s-node1 gvisor]#vi /etc/sysconfig/kubelet

Replace

KUBELET_EXTRA_ARGS=

with

KUBELET_EXTRA_ARGS=--container-runtime=remote --container-runtime-endpoint=unix:///run/containerd/containerd.sock --cgroup-driver=systemd

[root@k8s-node1 gvisor]#systemctl restart kubelet

5. Verify

Note that this switch is not seamless: the Pods on the node are recreated.

Configuration complete. 😘

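Case 3: Running containers with gVisor in K8s

RuntimeClass is a feature for selecting the container runtime configuration, which is then used to run the containers of a Pod.

==💘 Hands-on: Running containers with gVisor in K8s - 2023.6.3 (tested successfully)==

- Lab environment

1. Windows 10, VMware Workstation virtual machines;

2. k8s cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version:v1.20.0

docker://20.10.7

- Lab files

Link: https://pan.baidu.com/s/1kmPpRWyWsvk0k8FM2jjdJw?pwd=0820

Extraction code: 0820

2023.6.3-K8s使用gVisor运行容器-code

1. Create the RuntimeClass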

[root@k8s-master1 ~]#mkdir k8s-gvisor

[root@k8s-master1 ~]#cd k8s-gvisor/

[root@k8s-master1 k8s-gvisor]#vim runtimeclass.yaml

apiVersion: node.k8s.io/v1 # RuntimeClass is defined in the node.k8s.io API group

kind: RuntimeClass

metadata:

name: gvisor # the name used to reference this RuntimeClass

handler: runsc # the name of the corresponding CRI handler configuration

# Deploy

[root@k8s-master1 k8s-gvisor]#kubectl apply -f runtimeclass.yaml

runtimeclass.node.k8s.io/gvisor created

[root@k8s-master1 k8s-gvisor]#kubectl get runtimeclasses

NAME HANDLER AGE

gvisor runsc 13s

2. Create a Pod to test gVisor

[root@k8s-master1 k8s-gvisor]#vim pod.yaml

apiVersion: v1

kind: Pod

metadata:

name: nginx-gvisor

spec:

runtimeClassName: gvisor

nodeName: "k8s-node1" # Note: nodeName is required here so that the Pod lands on a node where the runsc runtime is enabled

containers:

- name: nginx

image: nginx

# Deploy

[root@k8s-master1 k8s-gvisor]#kubectl apply -f pod.yaml

pod/nginx-gvisor created
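Pinning the Pod with `nodeName` bypasses the scheduler. As an alternative, RuntimeClass has an optional `scheduling.nodeSelector` field that steers Pods using the class to matching nodes. The sketch below writes such a manifest; the label `runtime: gvisor` and the file name are hypothetical, and this variant was not part of the tested lab.

```shell
#!/bin/sh
# Sketch only: RuntimeClass with a scheduling constraint instead of nodeName.
# The label runtime=gvisor and the file name are illustrative assumptions.
rc="${TMPDIR:-/tmp}/runtimeclass_scheduling.yaml"

cat > "$rc" <<'EOF'
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  name: gvisor
handler: runsc
scheduling:
  nodeSelector:
    runtime: gvisor   # hypothetical label; every node with runsc must carry it
EOF

echo "wrote $rc"
```

With this approach the node would first be labeled, for example `kubectl label node k8s-node1 runtime=gvisor`, and the Pod manifest would keep `runtimeClassName: gvisor` but drop `nodeName`. Applying the file would update the existing `gvisor` RuntimeClass.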

3. Verify

[root@k8s-master1 k8s-gvisor]#kubectl get po -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-gvisor 1/1 Running 0 11m 10.244.36.101 k8s-node1 <none> <none>

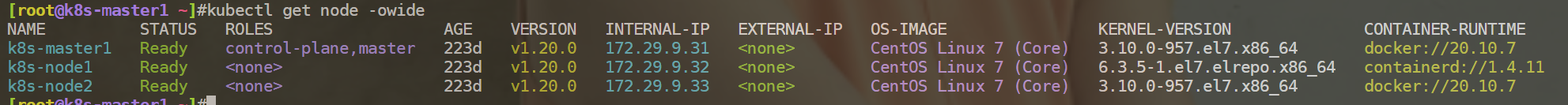

[root@k8s-master1 k8s-gvisor]#kubectl get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-master1 Ready control-plane,master 223d v1.20.0 172.29.9.31 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.7

k8s-node1 Ready <none> 223d v1.20.0 172.29.9.32 <none> CentOS Linux 7 (Core) 6.3.5-1.el7.elrepo.x86_64 containerd://1.4.11

k8s-node2 Ready <none> 223d v1.20.0 172.29.9.33 <none> CentOS Linux 7 (Core) 3.10.0-957.el7.x86_64 docker://20.10.7

[root@k8s-master1 k8s-gvisor]#kubectl exec nginx-gvisor -- dmesg

[ 0.000000] Starting gVisor...

[ 0.281926] Creating bureaucratic processes...

[ 0.750139] Mounting deweydecimalfs...

[ 0.931675] Forking spaghetti code...

[ 1.122117] Creating cloned children...

[ 1.339021] Recruiting cron-ies...

[ 1.589506] Adversarially training Redcode AI...

[ 1.615452] Consulting tar man page...

[ 1.899674] Waiting for children...

[ 2.110548] Committing treasure map to memory...

[ 2.488117] Feeding the init monster...

[ 2.746305] Ready!

As expected: this Pod uses gVisor as its container runtime.
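The same check can be scripted by looking for the gVisor boot banner in the `dmesg` output. The helper below is a minimal sketch; the function name `runs_under_gvisor` is hypothetical, and it relies only on the "Starting gVisor" line seen in the output above.

```shell
#!/bin/sh
# runs_under_gvisor
# Reads `dmesg` output on stdin and succeeds if it contains the gVisor
# boot banner.
runs_under_gvisor() {
    grep -q 'Starting gVisor'
}
```

For example, `kubectl exec nginx-gvisor -- dmesg | runs_under_gvisor && echo "sandboxed by gVisor"` would print the confirmation for the Pod created above.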

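Test complete. 😘

FAQ

Summary

Multiple container runtimes

About me

What this blog aims for:

- Clean layout and concise language;

- Each document doubles as a manual: detailed steps, no hidden pitfalls, source files provided;

- Every hands-on document here has been tested by me. If you run into questions while following along, feel free to contact me and we can work through them together.

🍀 WeChat: x2675263825 (舍得), QQ: 2675263825.

🍀 WeChat official account: 《云原生架构师实战》

🍀 Yuque

https://www.yuque.com/xyy-onlyone

🍀 csdn https://blog.csdn.net/weixin_39246554?spm=1010.2135.3001.5421

🍀 Zhihu https://www.zhihu.com/people/foryouone

Closing

That is all for this installment. Thank you for reading, and see you next time!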